19 Aug 2019

CloudFront is the CDN service offered by Amazon. I will not go into detail about what a CDN is; there are plenty of resources on the web that describe it. In short, it’s a set of servers distributed across the globe which cache content so users can reach data with the fewest network hops. In CloudFront, these servers are called Edge Locations. There are many more Edge Locations than Regions or Availability Zones.

CloudFront provides more functionality than a typical CDN. For example, being an Amazon product, it’s well integrated with other services such as S3, ELB and EC2. It can act as a transfer accelerator for file uploads. So if somebody in Australia wants to upload a file to a service hosted in Virginia, they can upload it to the Sydney Edge Location, which then routes the request over an optimal network path to Virginia at a much faster speed. You can also use Lambda@Edge to run custom code closer to your users and customize the user experience. It can also be used for DDoS mitigation with AWS Shield.

Cached content stays available at an edge location until its TTL (time to live) expires, but it can be cleared earlier by requesting an invalidation. There may be charges for removing cached content before the TTL expires.

Here are some terms that we should be familiar with:

Origin

The origin is the location where your primary content lives. This may be your S3 bucket, EC2 instance, Elastic Load Balancer or a third party hosted service. When a request reaches an edge location that does not have the content yet, the edge location fetches it from the origin and then serves it. Later requests are served directly from the cached copy at the edge location. You can also set up redundant origins so that if one origin is not available, requests can fall back to another origin.
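To make the fetch-then-cache behaviour concrete, here is a toy model of a single edge location. It is only a sketch for intuition and not how CloudFront is implemented; the class name `EdgeCache`, the `fetch_from_origin` callback and the injectable `clock` are all made up for this example.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy model of one edge location (illustrative only, not CloudFront code)."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], bytes],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin  # hypothetical callback that hits the origin
        self._ttl = ttl_seconds
        self._clock = clock
        # path -> (cached body, time at which the entry expires)
        self._store: Dict[str, Tuple[bytes, float]] = {}

    def get(self, path: str) -> Tuple[bytes, str]:
        """Return the body plus "hit" or "miss" to show where it came from."""
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and now < entry[1]:
            return entry[0], "hit"
        # Not cached, or the TTL has expired: go back to the origin.
        body = self._fetch(path)
        self._store[path] = (body, now + self._ttl)
        return body, "miss"

    def invalidate(self, path: str) -> None:
        """Drop a cached entry before its TTL expires."""
        self._store.pop(path, None)
```

The first `get` for a path reports a miss and calls the origin; repeated calls within the TTL report hits without touching the origin, and `invalidate` forces the next request back to the origin.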

Distribution

Using the AWS console or API calls, we can register an S3 bucket as the origin for static files and an EC2 instance as the origin for dynamic content. We can also define the time to live (TTL) and other rules. Once done, we create a “distribution”. A distribution can use selected edge locations or all of Amazon’s available edge locations for caching. There are two types of distribution. One serves standard website content like images or other static files. The other is RTMP, which serves video or audio streams. Once the distribution is created, we get a URL such as “abc123.cloudfront.net”. This is called the distribution domain name.

Additional Resources:

18 Aug 2019

AWS Snowmobile and Snowball are the craziest AWS services that I know of. Sometimes it’s cheaper and faster to physically carry your data from your premises to an AWS data center. Snowball and Snowmobile address exactly that.

Snowball is a device which Amazon ships to you. A single device holds tens of terabytes, and several can be used together for petabyte-scale transfers. You attach the device to your data center network, transfer your data to it and ship it back to Amazon. Amazon then attaches the device to their network and transfers the data to S3 for you. This is much faster and cheaper than uploading terabytes of data over the internet. For example, uploading 100 TB of data will take more than 100 days on a dedicated 100 Mbps connection. That same transfer can be accomplished with Snowball within a week. Bandwidth costs of a large transfer can also easily run into thousands of dollars. Snowball enables that transfer at as little as 1/5th of the cost. Refer to the [Amazon Snowball](https://aws.amazon.com/snowball/) page for more details.
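The "more than 100 days" figure is easy to sanity check. Here is a small sketch of the arithmetic; the function name `transfer_days` and the `utilization` parameter are my own, and I am assuming decimal units (1 TB = 10^12 bytes, 1 Mbps = 10^6 bits per second).

```python
def transfer_days(size_tb: float, link_mbps: float, utilization: float = 1.0) -> float:
    """Days needed to push size_tb terabytes through a link_mbps link.

    utilization is the fraction of the link you can actually use (assumed,
    e.g. 0.8 if other traffic and protocol overhead take 20%).
    """
    bits_to_send = size_tb * 1e12 * 8
    effective_bits_per_second = link_mbps * 1e6 * utilization
    seconds = bits_to_send / effective_bits_per_second
    return seconds / 86400  # seconds per day


if __name__ == "__main__":
    print(f"100 TB at 100 Mbps, full line rate: {transfer_days(100, 100):.1f} days")
    print(f"100 TB at 100 Mbps, 80% usable:     {transfer_days(100, 100, 0.8):.1f} days")
```

At full line rate this comes out to roughly 93 days, and it crosses 100 days as soon as you assume the link is not perfectly utilized, which is consistent with the claim above.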

AWS Snowmobile is crazier than Snowball. It enables you to transfer up to 100 PB of data to Amazon S3. Amazon sends a truck carrying a container, along with staff, to your data center. They set up a switch and connect it between your data center and the container. Once the data is loaded, the truck is driven back to Amazon where the data is transferred to S3. It can optionally include security personnel, GPS tracking, alarm monitoring, 24/7 video surveillance and an escort security vehicle in transit. See the [Amazon Snowmobile](https://aws.amazon.com/snowmobile/) page for details.

16 Aug 2019

S3 is probably the second most frequently used AWS service after EC2. From what I have heard, it’s a big part of the Solutions Architect exam, so I will cover it in detail. S3 has a lot of features, so I won’t be covering them all but will go through the important ones. For a detailed S3 overview, A Cloud Guru has a deep dive S3 course which you can refer to.

S3 stands for Simple Storage Service, that is, three S’s in the name. It’s an object storage service. Objects are basically files plus some metadata about those files. All objects are stored in buckets. You can think of buckets as folders in which you can organize and manage different files in S3. Bucket names need to be unique across all of AWS, so you can’t create a bucket with a name that someone else has already taken.

An S3 object has following content:

- Key (name of file)

- Value (data)

- Metadata (tags/labels and other data about data)

- Version ID (when we enable versioning of objects)

- Access Control Lists

- Torrent

S3 has read-after-write consistency for new objects. So if you put a new object in S3, you can immediately access it. For deletes and updates it has eventual consistency (at the time of writing), so it may take a while to replicate an update or delete to all Availability Zones, and you may see a delay of a few milliseconds or seconds before the change is reflected everywhere.

Objects in S3 are designed for 99.999999999% (11 9s) durability. So the chances of losing your stored objects are almost nil unless the earth is hit by a meteor. The availability guaranteed by the S3 SLA is 99.9%. That is, the maximum downtime within the SLA is 8 hours 45 minutes 36 seconds per year, or 1 minute 26 seconds per day. Amazon says S3 is designed for 99.99% availability, that is, about 52 minutes 34 seconds of downtime per year or 9 seconds per day. While we are talking about SLAs, http://www.slatools.com/sla-uptime-calculator is a good tool for calculating what SLA percentages mean in terms of time.
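If you prefer to compute these numbers yourself, the conversion from an availability percentage to allowed downtime is a one-liner. A minimal sketch, assuming a 365-day year; the function name `allowed_downtime` is just something I picked.

```python
from datetime import timedelta


def allowed_downtime(availability_percent: float, period: timedelta) -> timedelta:
    """Maximum downtime within `period` that still meets the availability target."""
    fraction_down = (100 - availability_percent) / 100
    return timedelta(seconds=period.total_seconds() * fraction_down)


if __name__ == "__main__":
    year = timedelta(days=365)  # assumption: non-leap year
    day = timedelta(days=1)
    for target in (99.9, 99.99):
        print(f"{target}%: {allowed_downtime(target, year)} per year, "
              f"{allowed_downtime(target, day)} per day")
```

For 99.9% this gives about 8 hours 45 minutes 36 seconds per year, matching the figure quoted above.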

Some features of S3

S3 supports encryption and maintains compliance programs such as PCI-DSS, HIPAA/HITECH, FedRAMP, EU Data Protection Directive, and FISMA to help you meet regulatory requirements. It can also log access to objects for audit purposes. You can replicate S3 objects across regions for more availability. You can use S3 Batch Operations to edit properties of billions of objects in bulk. S3 can also be used with Lambda, so you can store content in S3 and serve it with a Lambda application without having to maintain additional infrastructure. You can also lock S3 objects to prevent their deletion. Multi-factor authentication can also be used to prevent accidental deletion of S3 objects. You may also query S3 objects using SQL via Amazon Athena. S3 Select can be used to retrieve a subset of the data in an object rather than the complete object, which improves query performance.

Storage Tiers, Versioning and Lifecycles

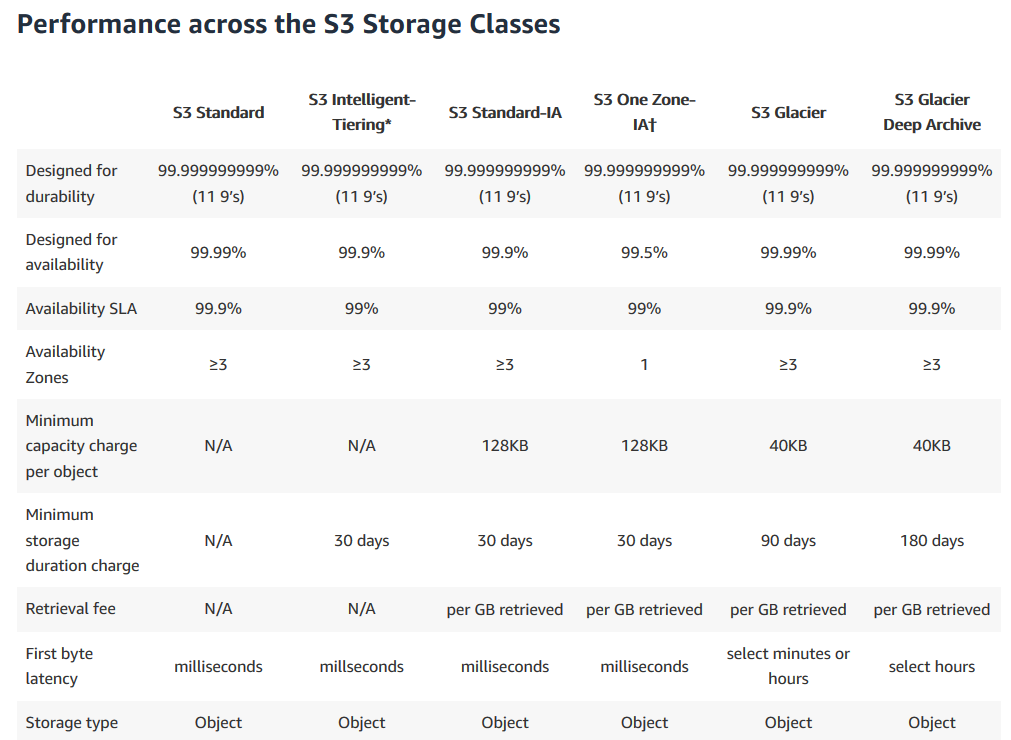

Amazon S3 offers a range of storage classes designed for different use cases. These include S3 Standard for general-purpose storage of frequently accessed data; S3 Intelligent-Tiering for data with unknown or changing access patterns; S3 Standard-Infrequent Access (S3 Standard-IA) and S3 One Zone-Infrequent Access (S3 One Zone-IA) for long-lived, but less frequently accessed data; and Amazon S3 Glacier (S3 Glacier) and Amazon S3 Glacier Deep Archive (S3 Glacier Deep Archive) for long-term archive and digital preservation. So for example, if you access data infrequently (S3-IA) or can tolerate delays of minutes to hours for data retrieval (S3 Glacier), you may store it in a different tier to save cost. S3 also allows us to set up lifecycle rules so that, for example, data older than 3 months is moved to the infrequently accessed tier. If we have compliance data such as old access logs, we may want to store it in Glacier Deep Archive. You can read more about these tiers on the Amazon S3 Storage Classes page. I am giving a brief explanation of these tiers below.

S3-Standard

S3 Standard is ideal for frequently accessed and changed data. Here are some of its main features:

- Designed for 99.999999999% durability and replicates data to at least 3 Availability Zones.

- Can survive complete Availability Zone failures. Designed for 99.99% Availability within a given year.

- Supports SSL in transit and Encryption at rest for data

- S3 Lifecycle management can be used to migrate data to other low cost tiers

S3-IA

S3-IA is for data which is infrequently accessed but requires immediate access when required. It’s cheaper than S3-Standard but you’re charged for data retrieval. It’s ideal for storing data of stale users, backups and storage of data for disaster recovery. Here are some features:

- Same throughput and latency as S3 Standard

- Lower cost for storage

- Can survive destruction of Availability Zone

- Designed for 99.9% Availability.

- Higher retrieval cost

S3-IA-One-Zone

Unlike S3-Standard and S3-IA which store data in multiple availability zones, S3-IA-One-Zone stores data in one zone. So in event of loss of that availability zone, data will be lost. It still redundantly stores data and is 99.999999999% resilient to loss within same zone. This class is ideal for secondary backups or easily re-creatable data. It can also be used as class for storage of data which is replicated using cross region replication. Here are some features which are different from S3 standard and S3-IA:

- Designed for 99.5% availability over a given year

- Same durability as S3 standard but within single zone


S3 Glacier

S3 Glacier is designed for data archival. Data stored in Glacier is not immediately accessible, but there are three retrieval options which range from minutes to hours: Expedited, Standard and Bulk. Expedited retrievals are generally available within 1-5 minutes. Standard retrievals are available within 3-5 hours and Bulk retrievals within 5-12 hours. S3 Restore Upgrades allow us to speed up a retrieval job that is already in progress. So for example, if you have a Bulk retrieval job in progress but it has become more critical to retrieve the data, you can upgrade the job to Expedited. You will be charged for both jobs in that case.

Here are key features of Glacier:

- Low-cost design is ideal for long-term archive

- Configurable retrieval times, from minutes to hours

Refer to the Amazon Glacier page for details.

S3 Glacier Deep Archive

S3 Glacier Deep Archive is for long-term retention of data which is accessed once or twice a year. It costs $0.00099 per GB per month (or about $1 per TB per month). It’s the lowest cost storage option on AWS. Deep Archive is up to 75% cheaper than the standard Glacier class. You can use Standard retrieval to get data back within 12 hours. You may also use Bulk retrieval to further reduce retrieval cost. It’s designed for 99.9% availability. When you can afford long retrieval times for data, you may use Deep Archive. Deep Archive is designed for data stored for a long period of time, so the minimum storage duration for objects is 180 days. If you delete an object before 180 days, you’re charged for the time it was stored plus a pro-rated charge for the remaining days.

Storage Tiers Summary

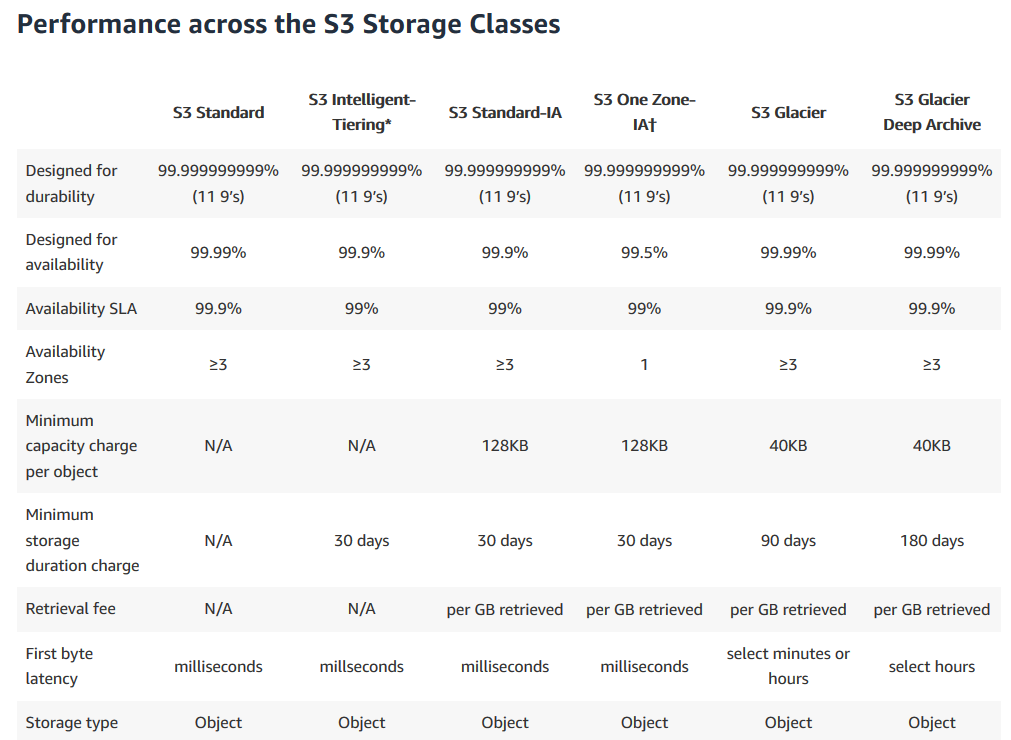

The table above, from the S3 storage classes page, explains the differences between the tiers.

Intelligent Tiering

S3 Intelligent-Tiering is meant for data which has unknown or changing access patterns. It monitors your access patterns and automatically moves data to the lower cost infrequent access tier. If an object is accessed later, it moves the object back to the frequent access tier.

Lifecycle Management

Lifecycle management lets you define the lifecycle of objects with a predefined policy and reduce cost. You can define these policies to automatically move data from S3 Standard to S3-IA, IA One Zone or the Glacier archives. It can also be used to delete objects after a certain time. You can also set up a policy that expires incomplete multipart uploads after a certain time.
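As a rough idea of what such a policy looks like, here is a sketch that builds a lifecycle configuration as a plain Python dict. The dict follows the shape that boto3's `put_bucket_lifecycle_configuration` accepts as its `LifecycleConfiguration` argument, as far as I know; the helper name, the rule ID and the day counts are my own assumptions, so adjust them to your needs.

```python
import json
from typing import Optional


def build_lifecycle_configuration(
    prefix: str,
    ia_after_days: int = 90,
    glacier_after_days: int = 365,
    expire_after_days: Optional[int] = None,
    abort_multipart_after_days: int = 7,
    rule_id: str = "tiering-rule",  # hypothetical rule name
) -> dict:
    """Build one lifecycle rule for objects under `prefix` (nothing is sent to AWS)."""
    rule = {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        # Move to cheaper storage classes as the objects age.
        "Transitions": [
            {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
            {"Days": glacier_after_days, "StorageClass": "GLACIER"},
        ],
        # Clean up multipart uploads that were started but never completed.
        "AbortIncompleteMultipartUpload": {
            "DaysAfterInitiation": abort_multipart_after_days
        },
    }
    if expire_after_days is not None:
        # Optionally delete objects altogether after this many days.
        rule["Expiration"] = {"Days": expire_after_days}
    return {"Rules": [rule]}


if __name__ == "__main__":
    print(json.dumps(build_lifecycle_configuration("logs/", expire_after_days=730), indent=2))
```

The helper only builds and prints the configuration, so it is safe to run without an AWS account.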

Versioning

Versioning enables us to keep multiple versions of the same object across update and delete requests. With versioning enabled, an update or delete results in a new version of the object rather than overwriting the existing version. Versioning is important for situations where you want to avoid accidentally deleting or overwriting objects. Retrieval always serves the latest version, but you may pass a version ID in the query parameters to get older versions. Each version of an object is charged for, so you can use lifecycle management to delete older versions. When you delete an object with versioning enabled, it only adds a delete marker as the latest version of the object. To truly delete an object, you have to delete all of its versions. Delete operations are not replicated in Cross Region Replication, to avoid accidental data loss. Enabling versioning is irreversible, that is, once enabled on a bucket it can’t be disabled, only suspended.
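The delete marker behaviour is easier to see in a toy model. The class below is an in-memory sketch of a versioned bucket written for illustration; it is not the S3 API, and the names (`VersionedBucket`, `delete_version`, the `v1`, `v2` style IDs) are invented here.

```python
import itertools
from typing import Dict, List, Optional, Tuple


class VersionedBucket:
    """In-memory toy model of a versioned bucket (not the real S3 API)."""

    def __init__(self) -> None:
        # key -> list of (version_id, data); data is None for a delete marker
        self._versions: Dict[str, List[Tuple[str, Optional[bytes]]]] = {}
        self._ids = itertools.count(1)

    def _new_id(self) -> str:
        return f"v{next(self._ids)}"

    def put(self, key: str, data: bytes) -> str:
        """Store a new version instead of overwriting the old one."""
        version_id = self._new_id()
        self._versions.setdefault(key, []).append((version_id, data))
        return version_id

    def delete(self, key: str) -> str:
        """A plain delete only adds a delete marker as the latest version."""
        version_id = self._new_id()
        self._versions.setdefault(key, []).append((version_id, None))
        return version_id

    def get(self, key: str, version_id: Optional[str] = None) -> Optional[bytes]:
        """Return the latest version, or a specific one if version_id is given."""
        versions = self._versions.get(key, [])
        if version_id is None:
            return versions[-1][1] if versions else None
        for vid, data in versions:
            if vid == version_id:
                return data
        return None

    def delete_version(self, key: str, version_id: str) -> None:
        """Permanently remove one specific version (or a delete marker)."""
        remaining = [v for v in self._versions.get(key, []) if v[0] != version_id]
        self._versions[key] = remaining
```

In this model, after a `delete` the latest `get` returns nothing, yet older versions are still retrievable by ID, and removing the delete marker with `delete_version` makes the previous version current again.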

Transfer Acceleration

Amazon S3 Transfer Acceleration improves upload speeds between clients and S3 buckets over long distances. Transfer Acceleration lets users in distant regions upload their data to their nearest CloudFront Edge Location, from where it is routed to the bucket’s region via Amazon’s backbone network, which is generally faster than uploading directly to that region.

According to S3 FAQs, “One customer measured a 50% reduction in their average time to ingest 300 MB files from a global user base spread across the US, Europe, and parts of Asia to a bucket in the Asia Pacific (Sydney) region. Another customer observed cases where performance improved in excess of 500% for users in South East Asia and Australia uploading 250 MB files (in parts of 50MB) to an S3 bucket in the US East (N. Virginia) region.”

You can use the speed comparison tool to preview the performance improvement you would get by enabling Transfer Acceleration.

Cross Region Replication

Cross region replication allows us to replicate data from one bucket to another across regions.

Additional Sources

15 Aug 2019

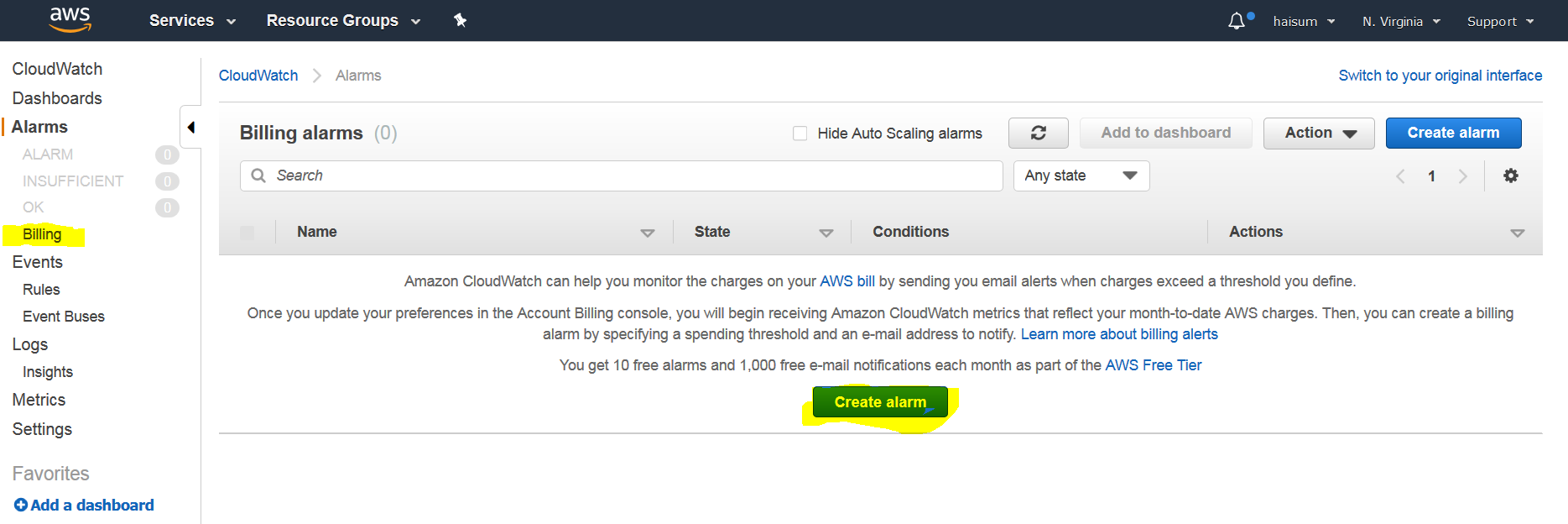

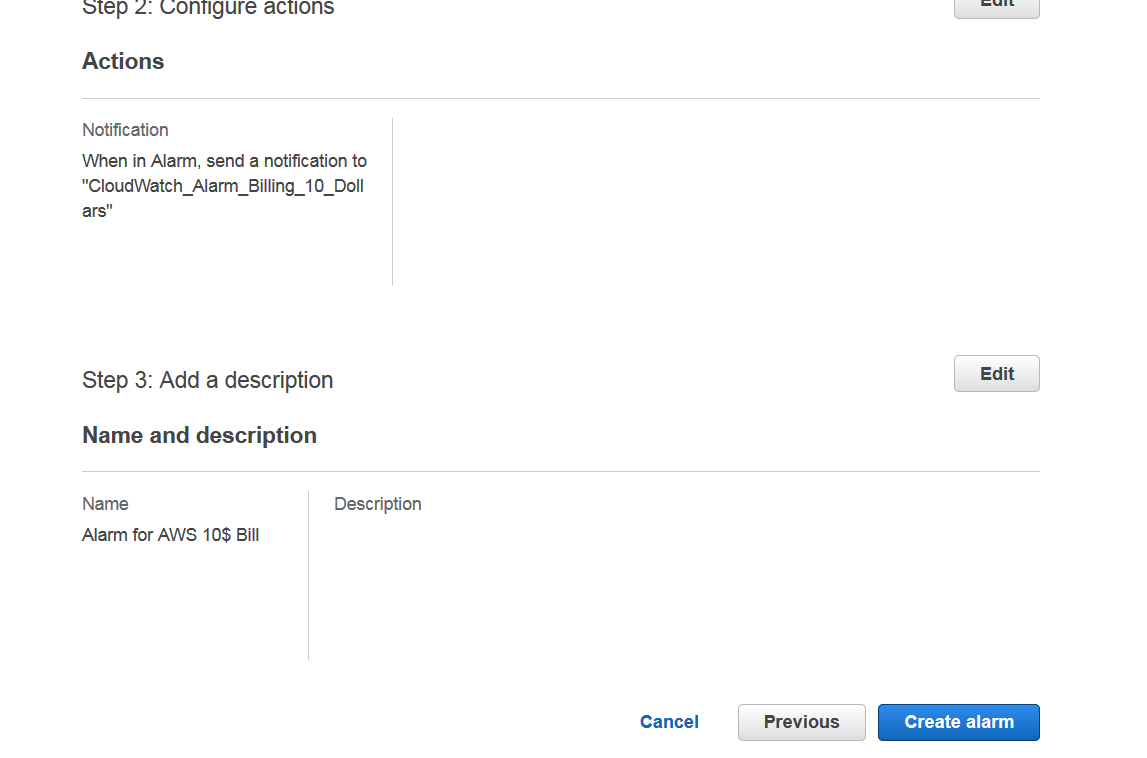

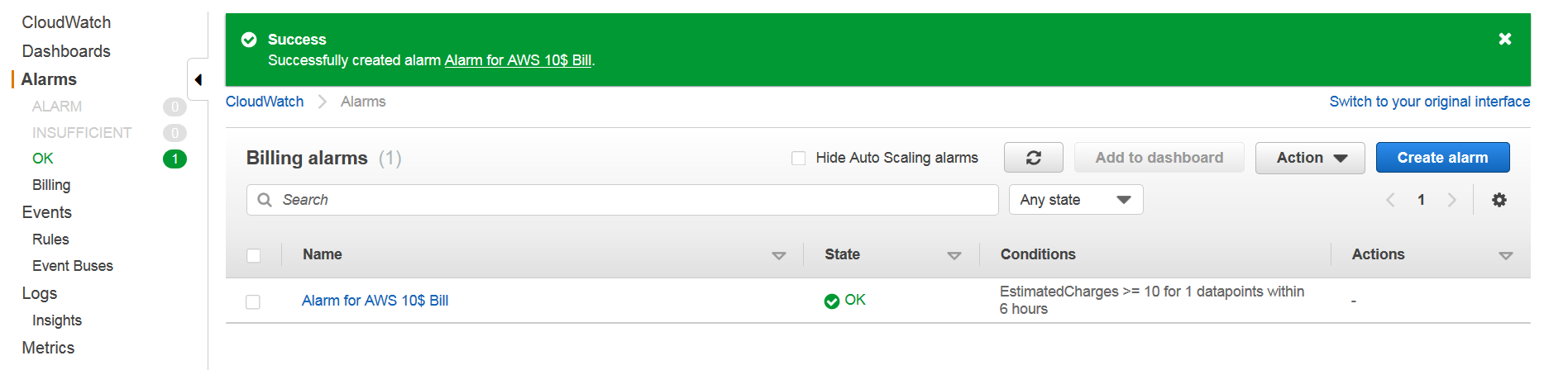

In this post I will document how to create a billing alert on AWS before we proceed to things that cost money to use, like S3 or EC2.

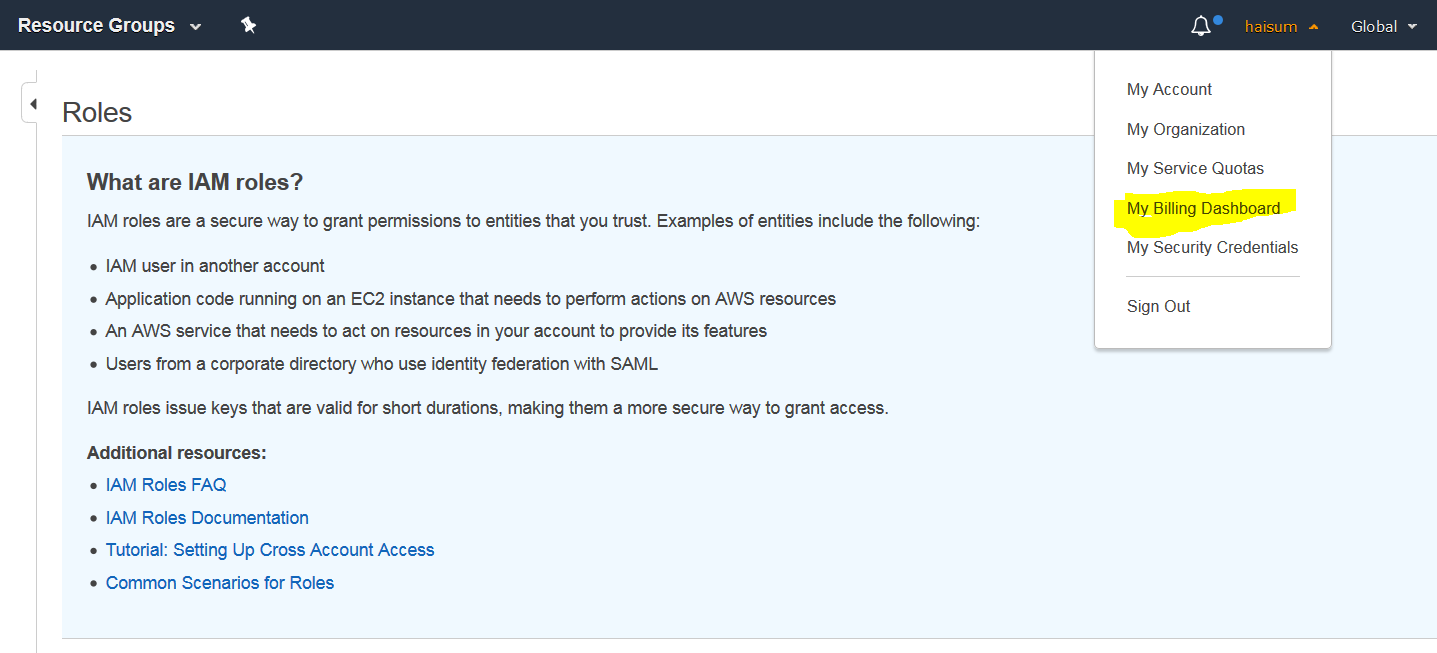

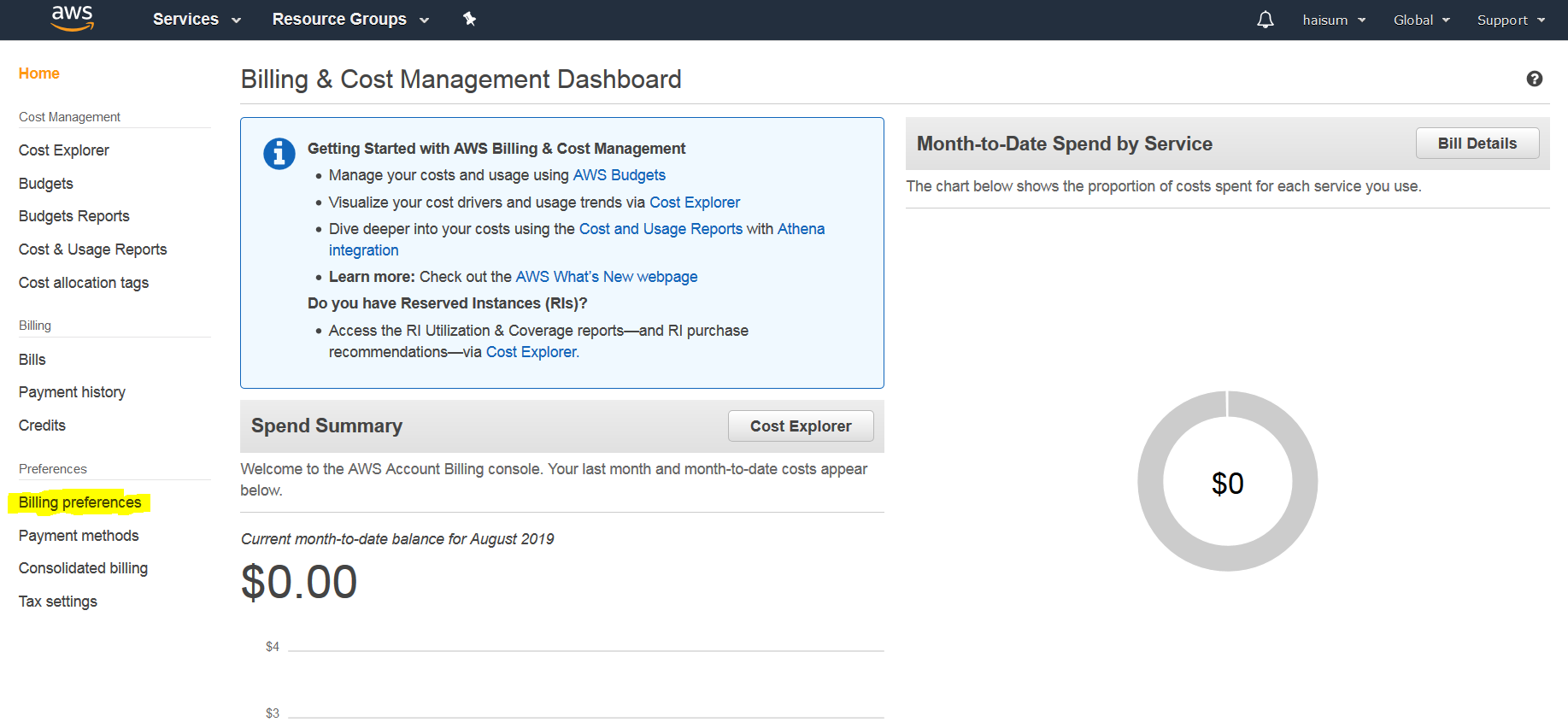

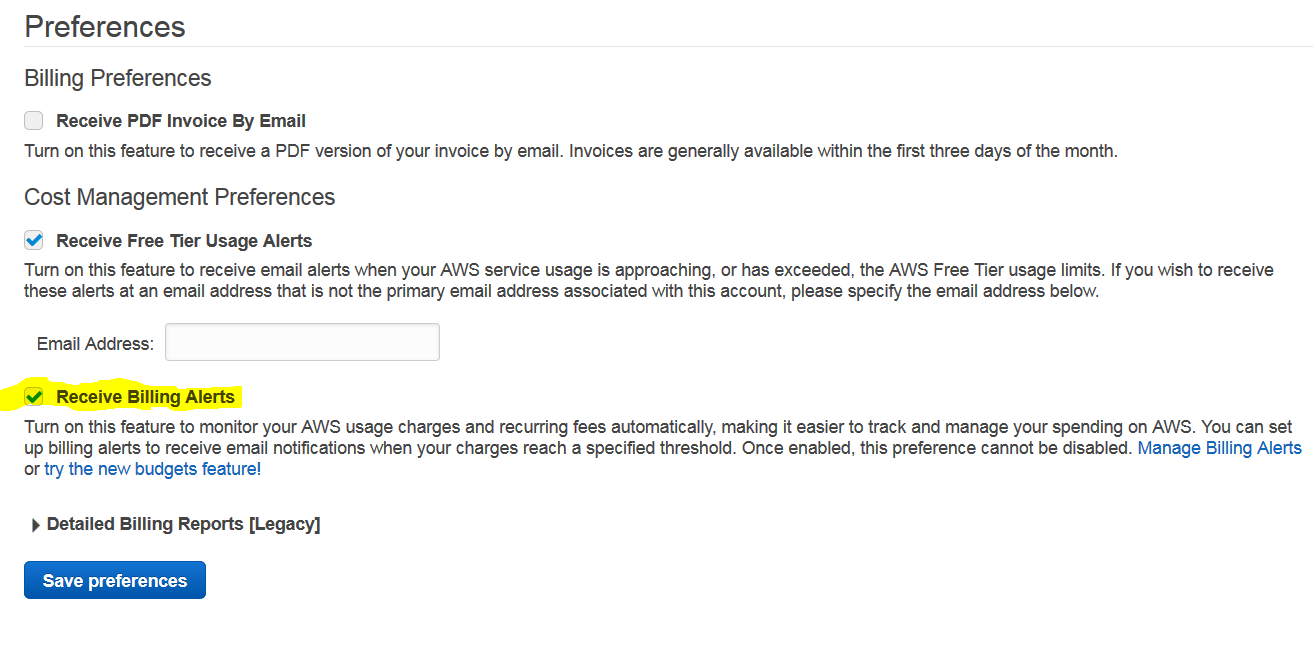

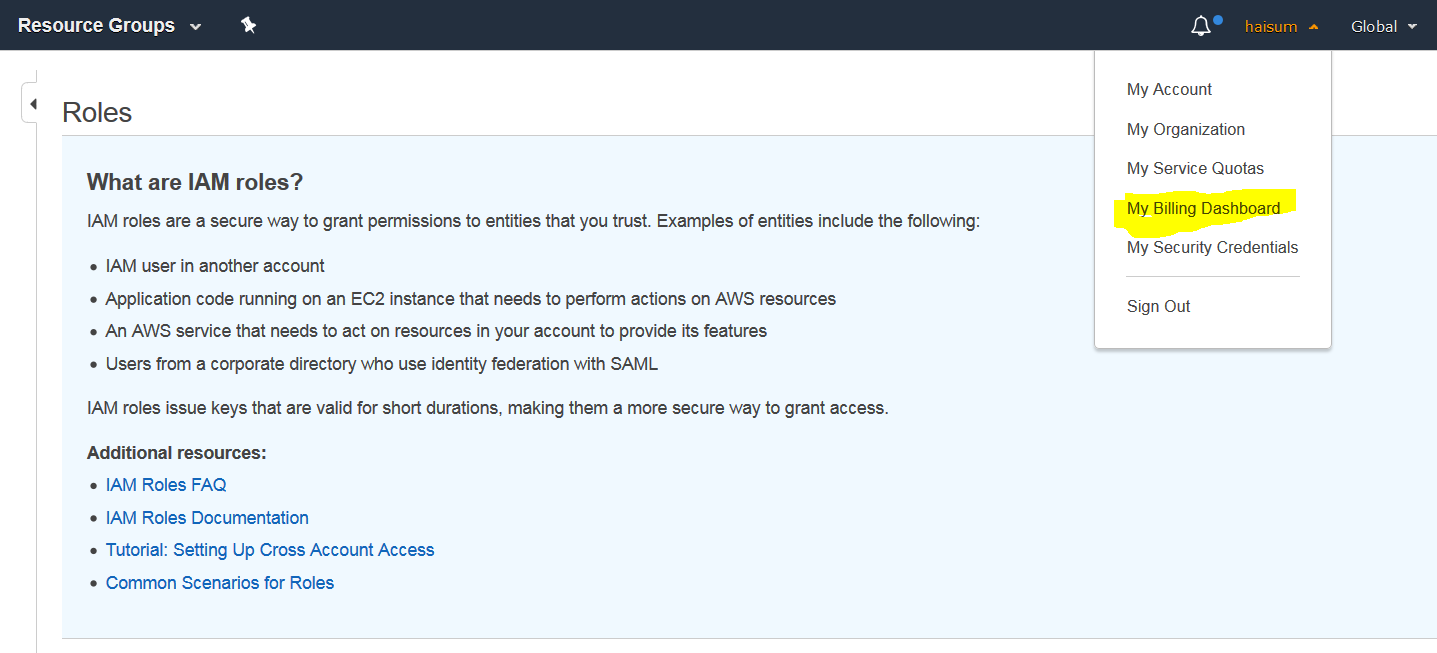

First go to your billing dashboard from top navigation.

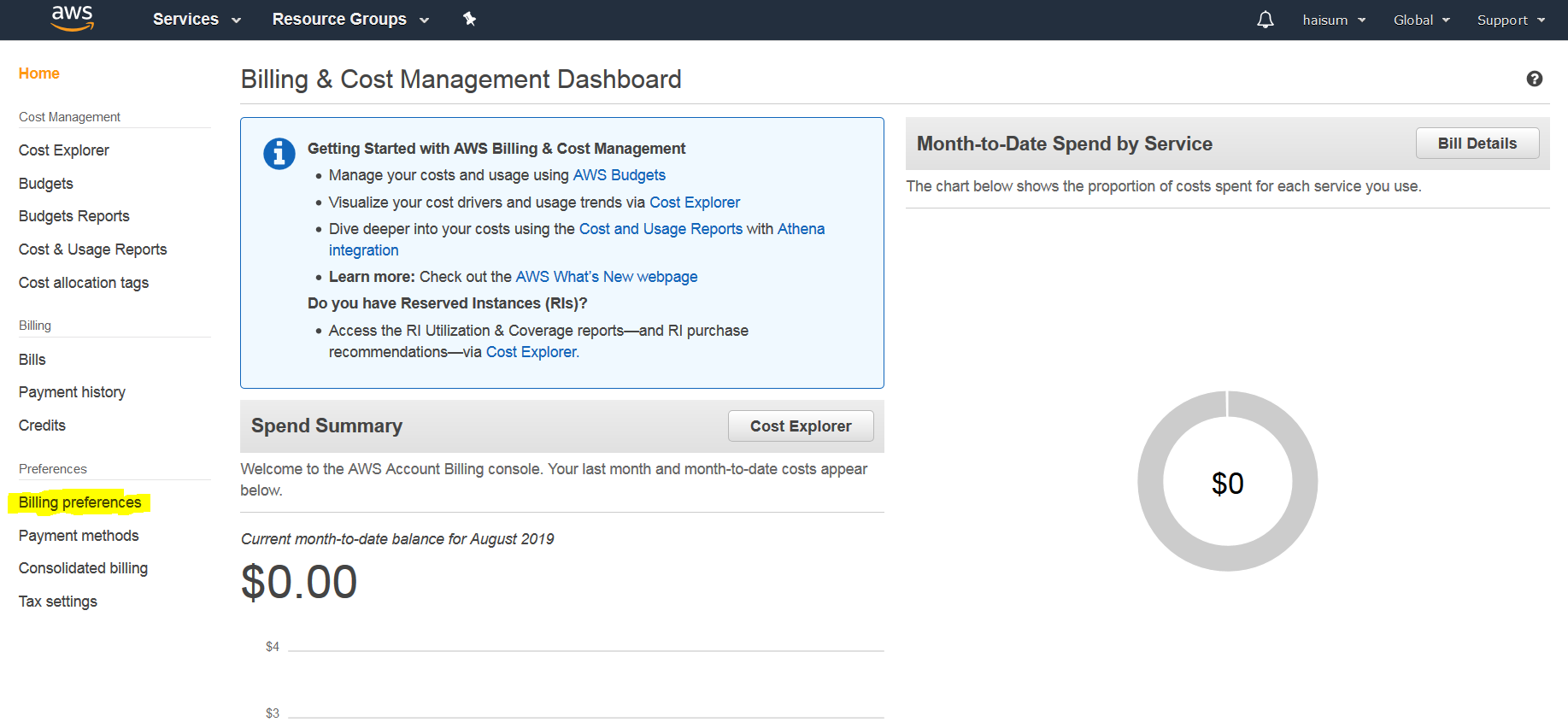

Then click Billing preferences in the left menu.

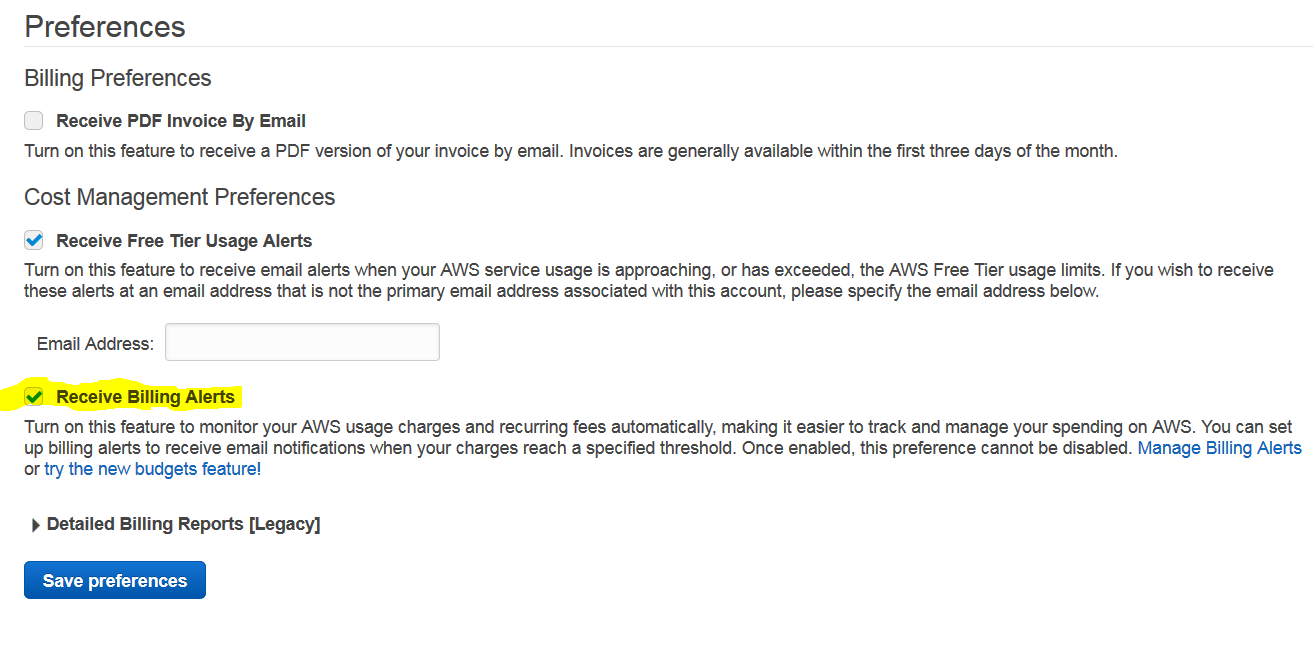

Now click Receive Billing Alerts then click Save Preferences.

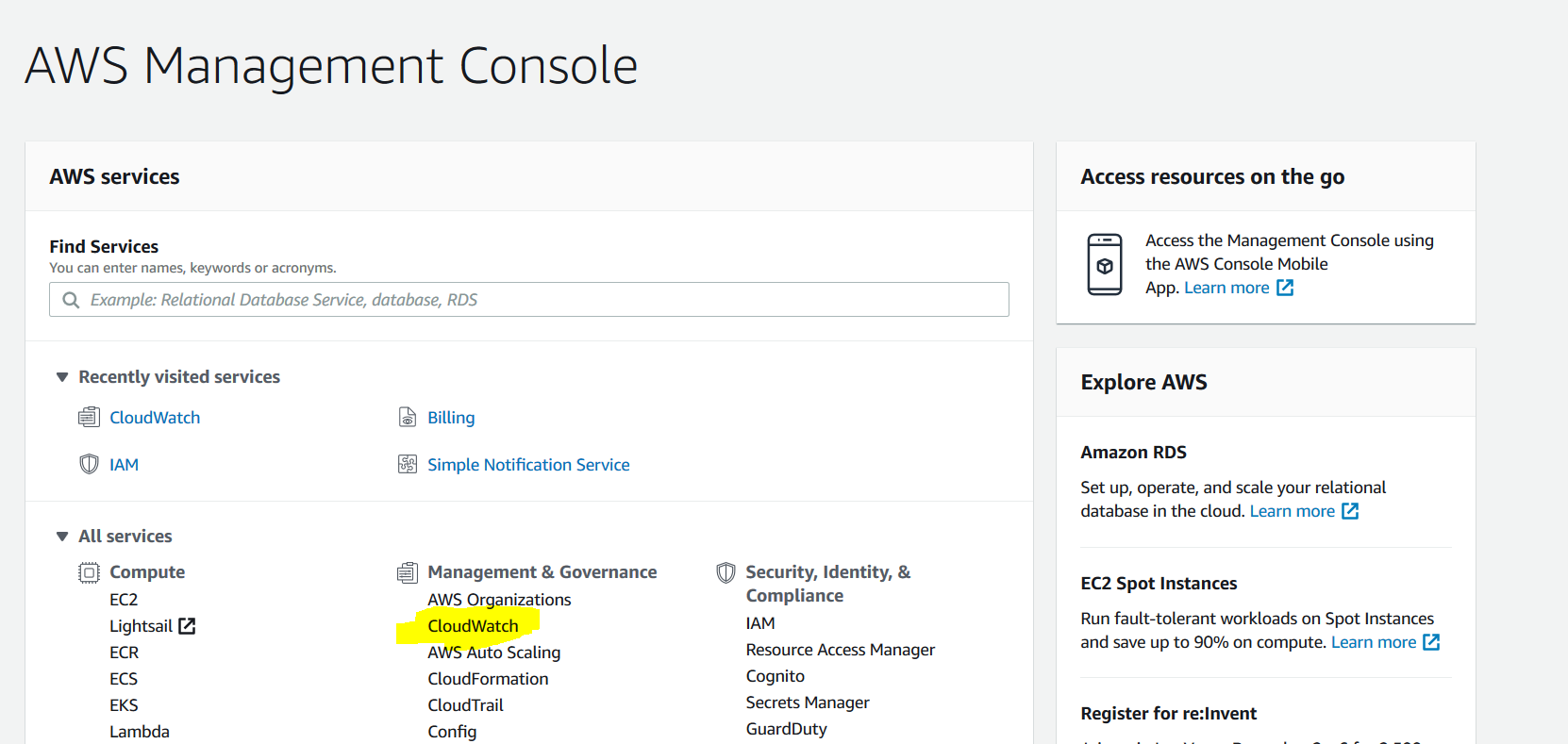

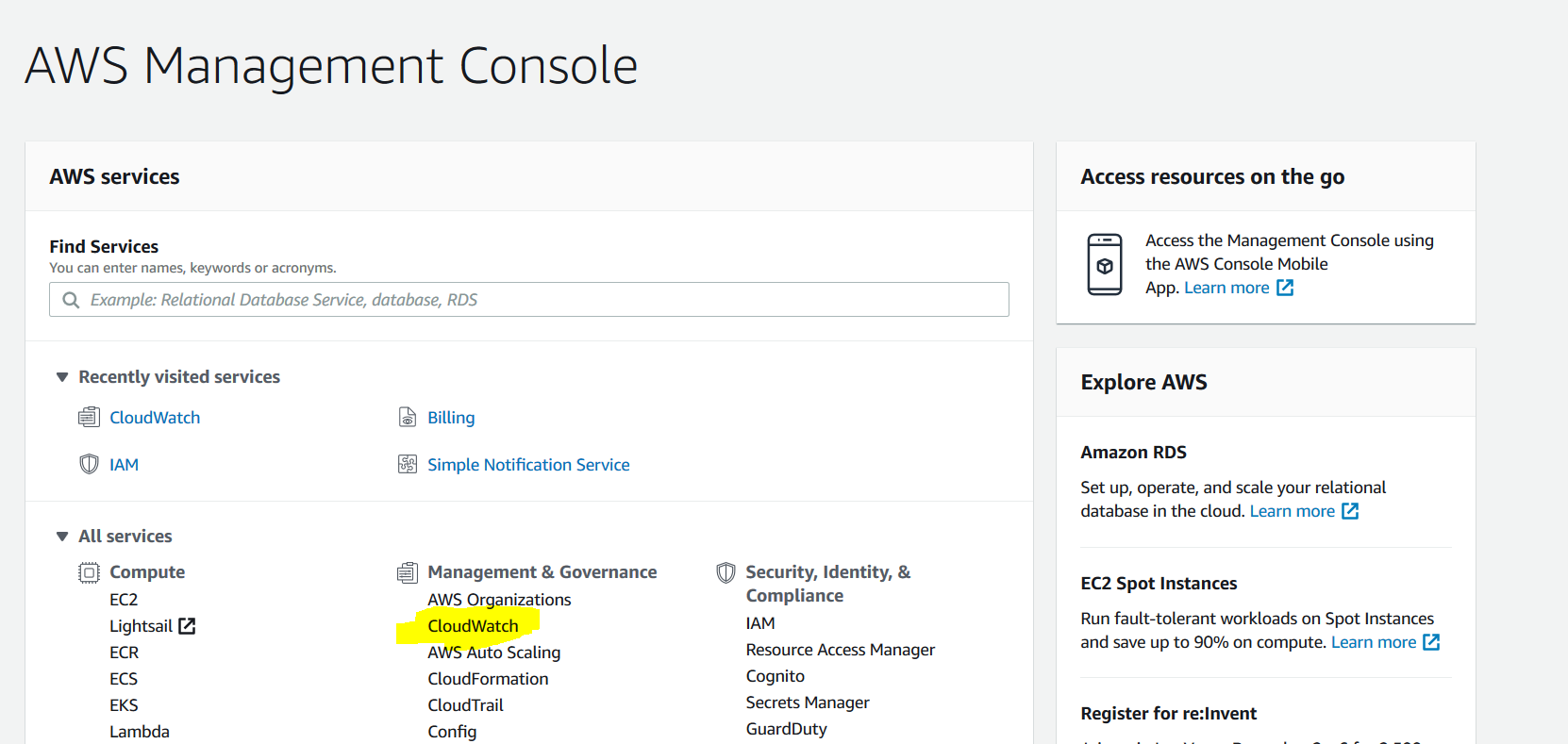

From services, select CloudWatch.

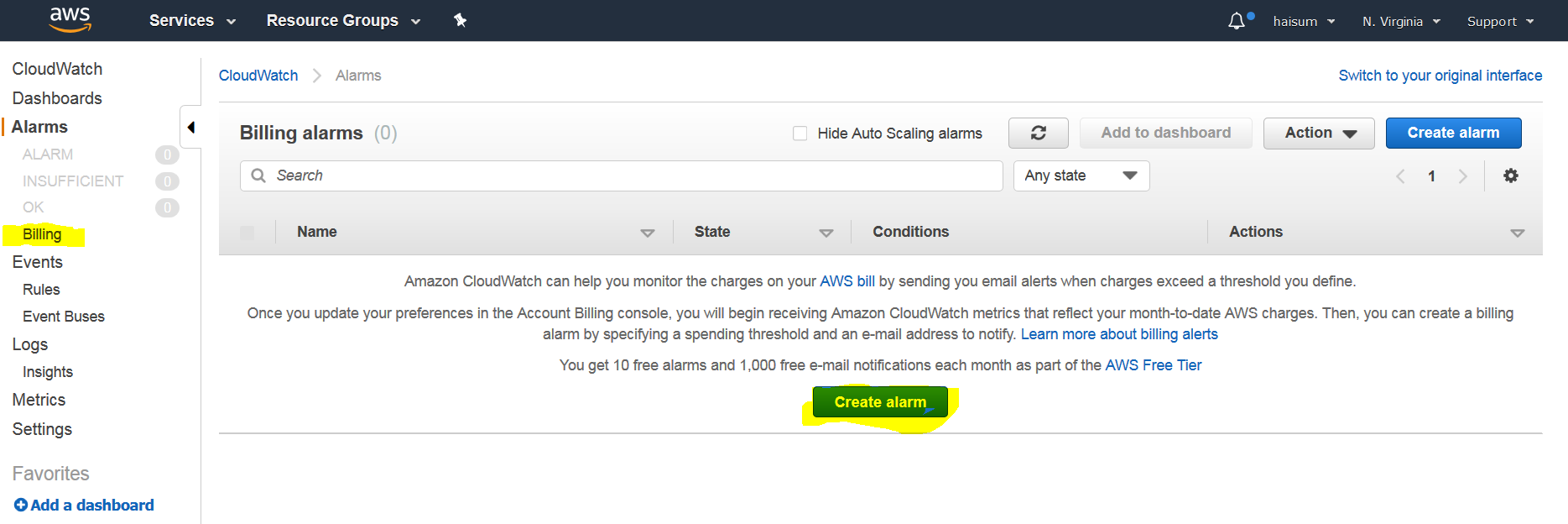

From the left menu click Billing, then click Create alarm.

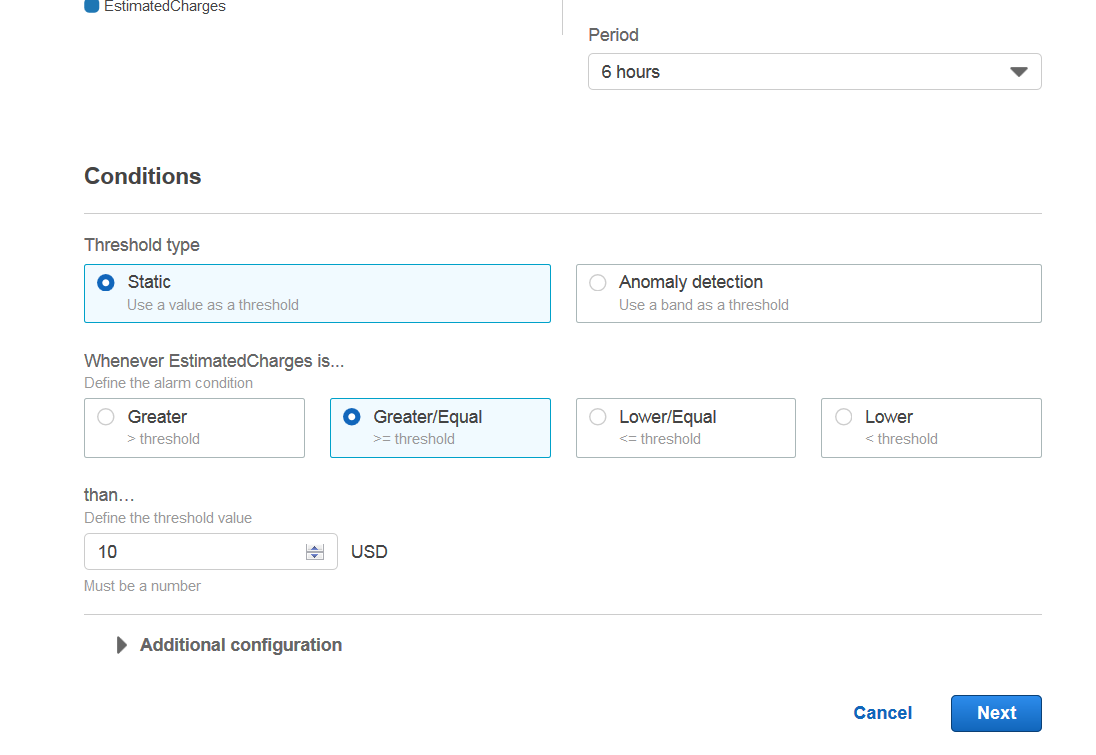

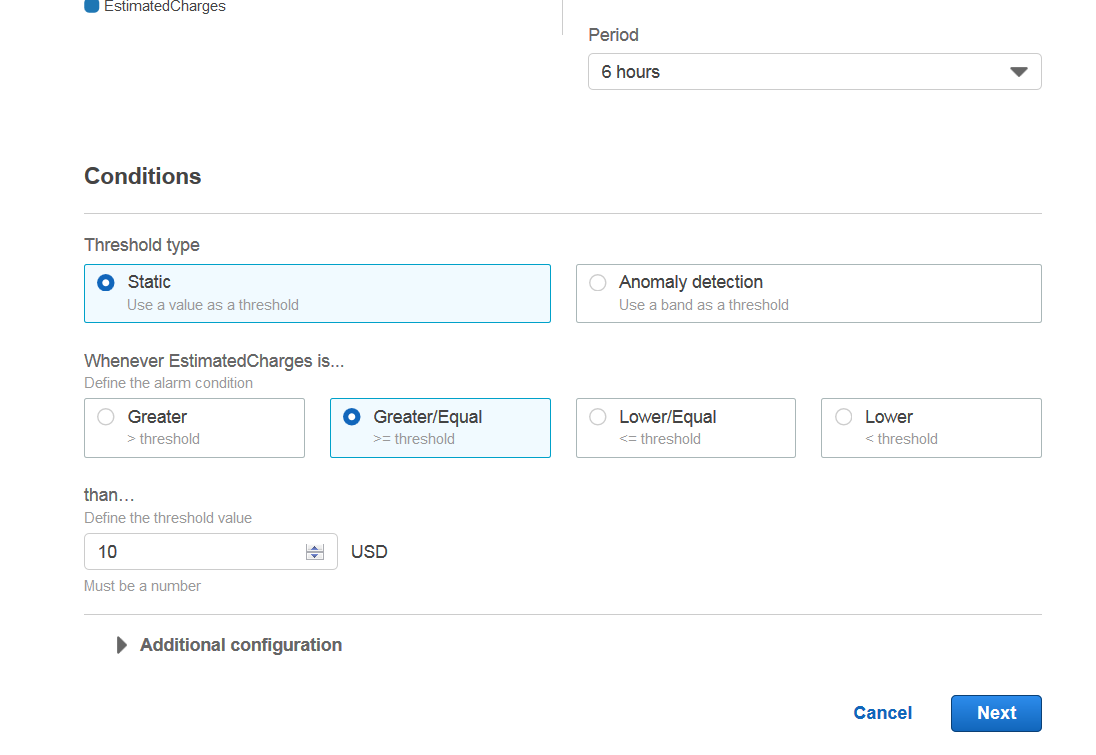

Scroll down, select your preferred options and enter the threshold amount. I put $10 for mine.

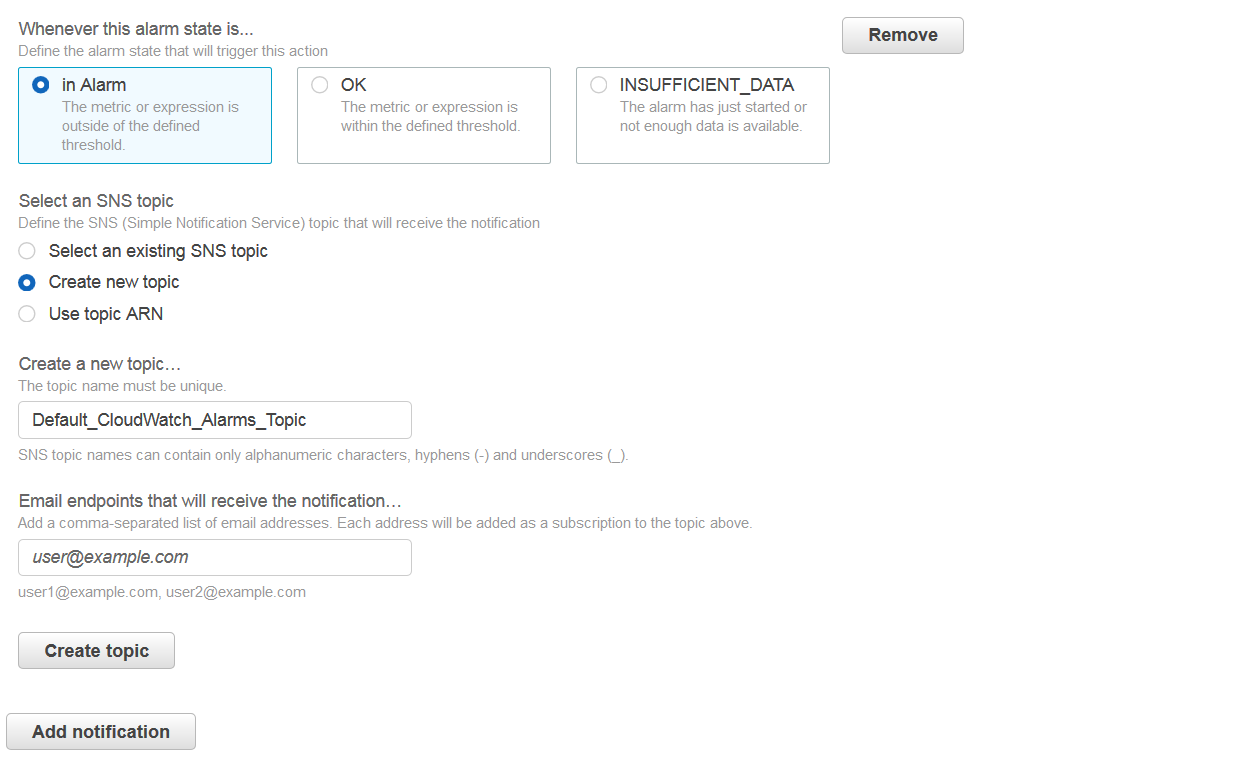

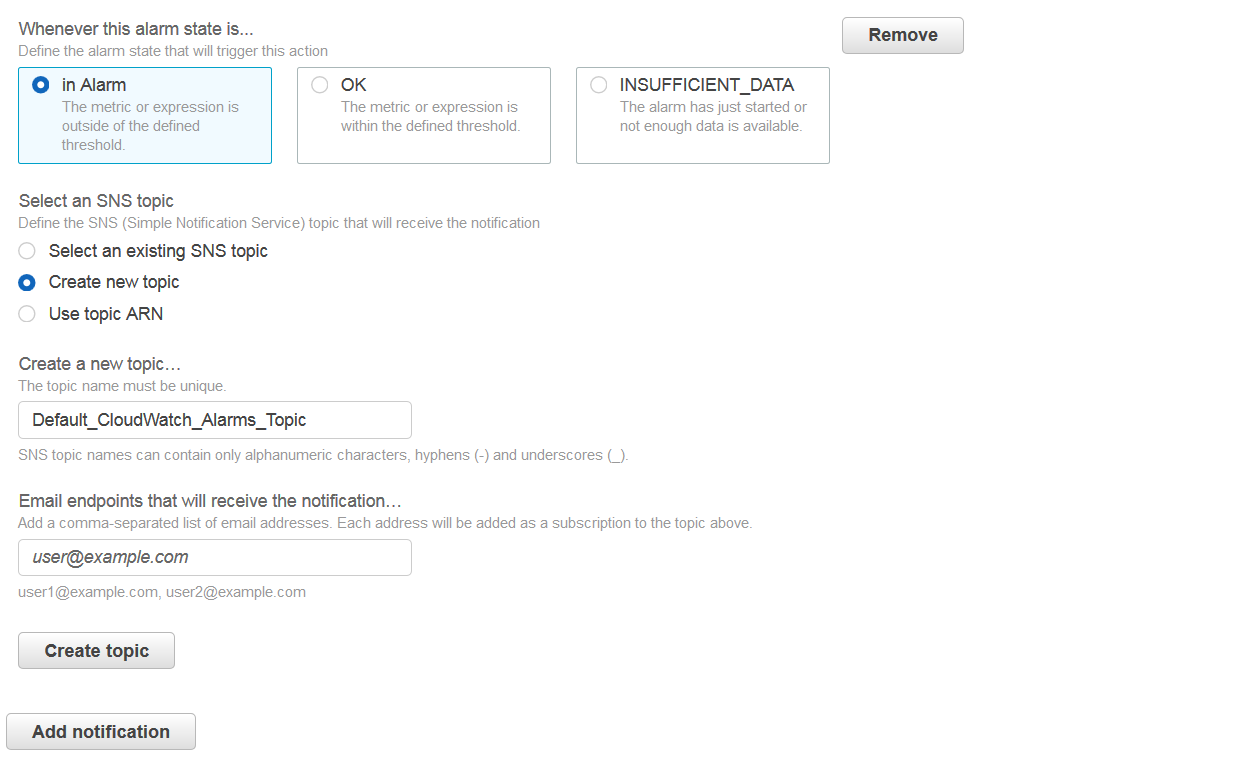

Next, create a new topic and enter your email address for it. You will need to click on a link in your email to subscribe to these notifications.

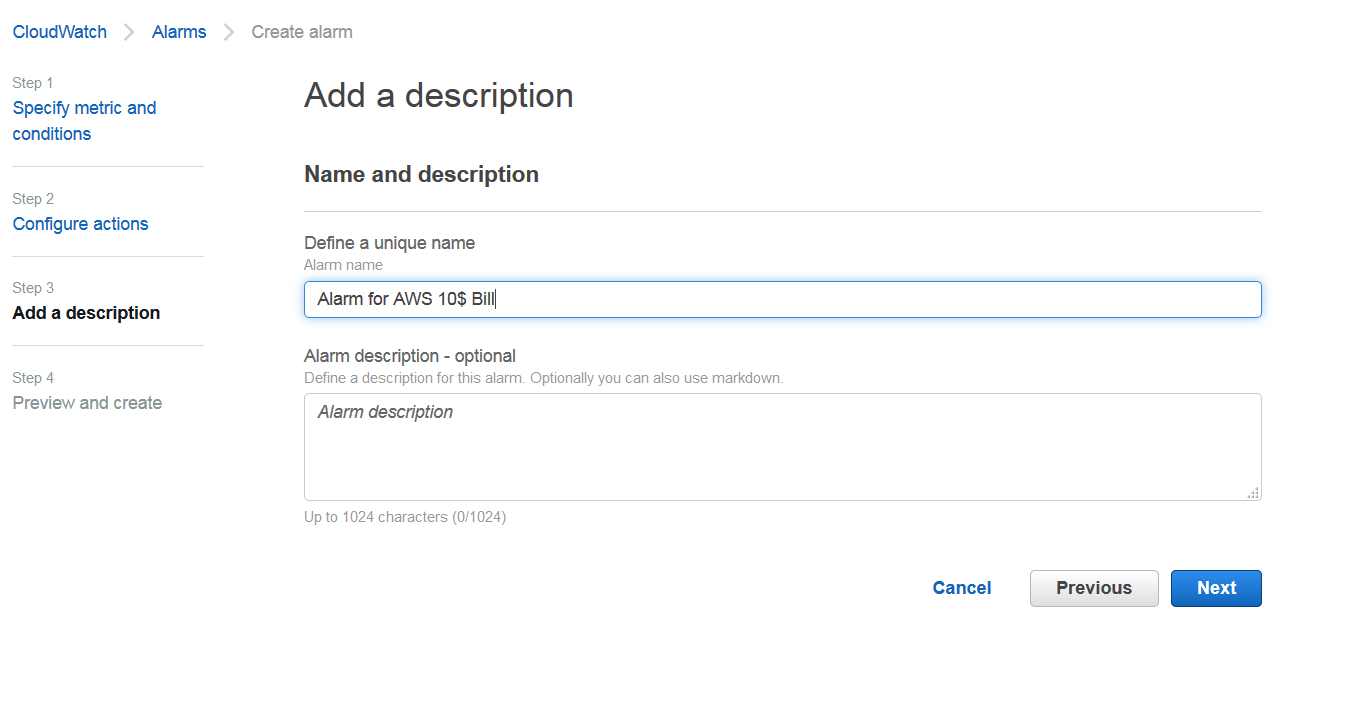

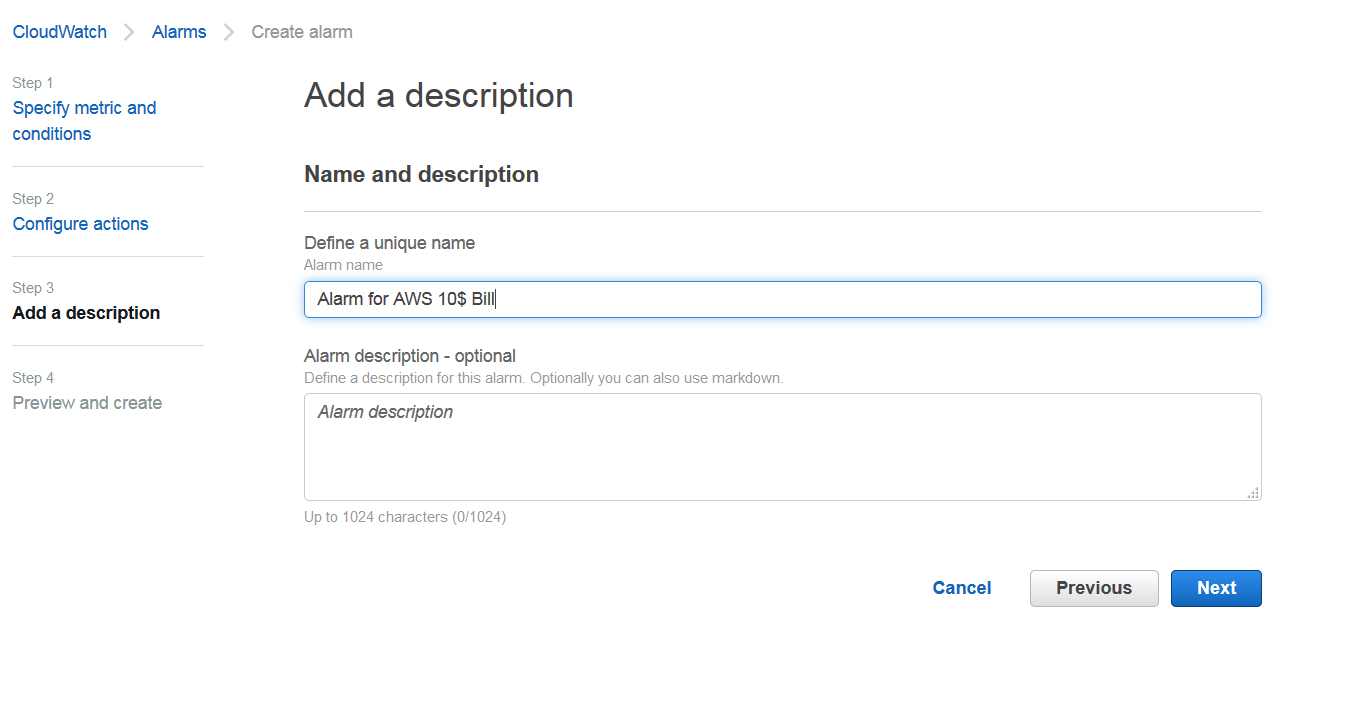

Once topic is created click next and name your alarm.

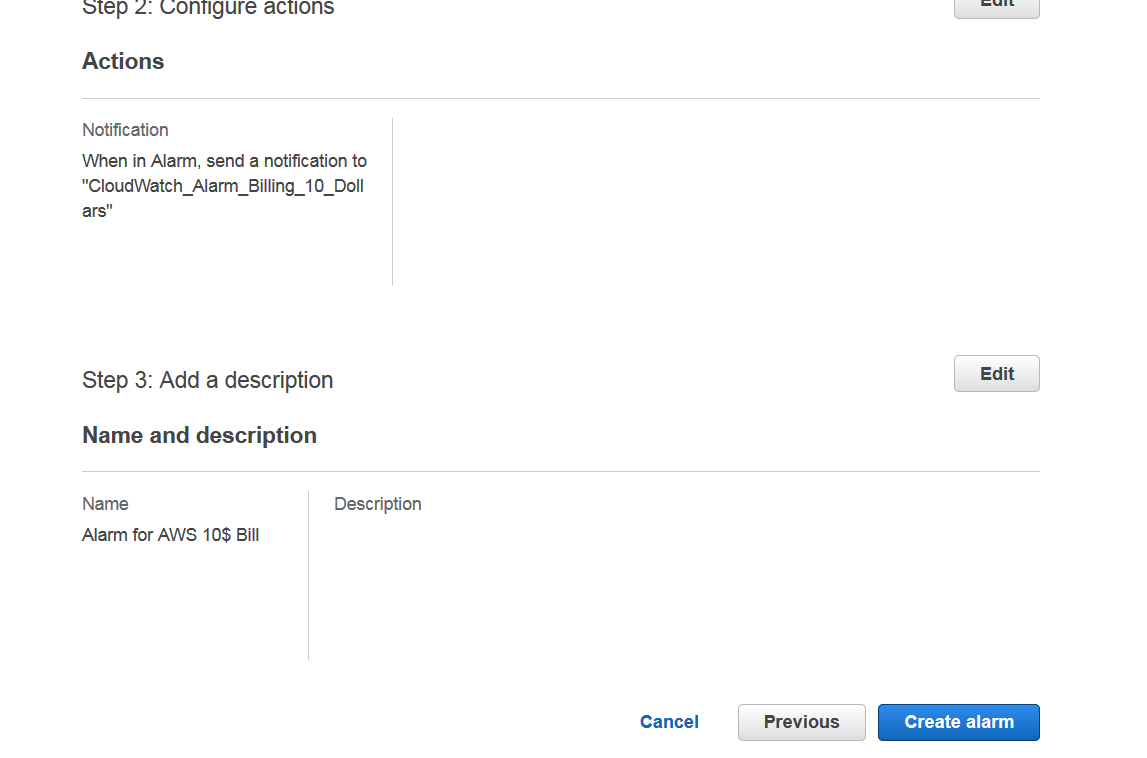

Finally verify all your information, scroll all the way down and click Create alarm.

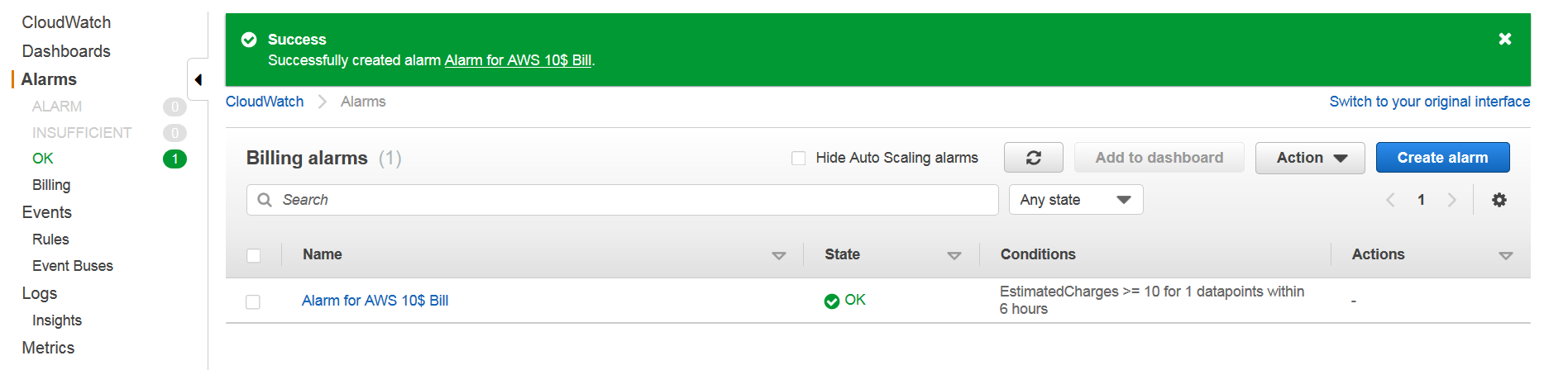

Your alarm should be active now.
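For reference, the same alarm can be described in code. The sketch below only builds the parameters as a dict; to my understanding they match the keyword arguments of boto3's CloudWatch `put_metric_alarm` call, and billing metrics live in the `AWS/Billing` namespace in us-east-1. The function name, default alarm name and the example topic ARN are placeholders I made up.

```python
def billing_alarm_params(
    threshold_usd: float,
    sns_topic_arn: str,
    alarm_name: str = "billing-alarm",  # hypothetical alarm name
) -> dict:
    """Parameters for an alarm that fires when estimated charges exceed the threshold."""
    return {
        "AlarmName": alarm_name,
        "Namespace": "AWS/Billing",
        "MetricName": "EstimatedCharges",
        "Dimensions": [{"Name": "Currency", "Value": "USD"}],
        "Statistic": "Maximum",
        "Period": 21600,  # 6 hours, in seconds
        "EvaluationPeriods": 1,
        "Threshold": threshold_usd,
        "ComparisonOperator": "GreaterThanThreshold",
        # The SNS topic that emails you, created in the steps above.
        "AlarmActions": [sns_topic_arn],
    }


if __name__ == "__main__":
    # Placeholder ARN for illustration only.
    params = billing_alarm_params(10, "arn:aws:sns:us-east-1:123456789012:billing-alerts")
    print(params)
```

Nothing here talks to AWS; passing the dict to a CloudWatch client would be the next step if you want to script the setup instead of clicking through the console.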

15 Aug 2019

AWS has the IAM service to let account holders manage access to resources by users or other entities. IAM allows account owners to create users, groups and roles (or delegate their creation) and assign permissions using policies.

Using IAM, one can create users and give them login access to a shared AWS console. Multiple users can be organized in groups, and groups can have permissions assigned to them by using policies. Permissions allow us to manage who can access what. In addition, IAM can be configured to enable identity federation, that is, users can log in using corporate Active Directory, Facebook, Google, LinkedIn or any OpenID-supported account. We can also enable multi-factor authentication, set up password rotation policies and manage access keys/passwords of users.

IAM also allows temporary access for services/users/devices when required. IAM is also essential if you want one of your services to access another service within AWS. So for example, if you want your EC2 instance to access S3, you will need to use IAM to create a role with permission to access S3 and attach that role to the EC2 instance.
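To give a feel for the EC2-to-S3 case, here is a sketch of the two JSON documents such a role typically needs: a trust policy saying EC2 may assume the role, and a permissions policy granting read access to one bucket. The helper names and the bucket name `my-example-bucket` are placeholders of mine; the script only prints the documents.

```python
import json


def ec2_trust_policy() -> dict:
    """Trust policy: lets the EC2 service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "ec2.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def s3_read_only_policy(bucket_name: str) -> dict:
    """Permissions policy: list one bucket and read the objects in it."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket_name}"],
            },
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{bucket_name}/*"],
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(ec2_trust_policy(), indent=2))
    print(json.dumps(s3_read_only_policy("my-example-bucket"), indent=2))  # placeholder bucket
```

Note that listing applies to the bucket ARN while reading applies to the objects under it, which is why the two statements use different resources.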

We explore Users/Groups/Roles and Policies in detail below:

User

A user is unique identity within an account. It can send requests to services such as EC2 and S3, use AWS console on behalf of account owner, and also access resources under different accounts on which account owner has access. All resources created and used by a user are paid for by account owner.

A user can have a password, access keys, an X.509 certificate, an SSH key and MFA for authentication. An employer can decide what makes sense for a user to have and may provide any or all of these credentials to them. The account owner can manage users directly or delegate user management to another user.

Users are global entities. You cannot restrict a user to a particular region or define users which are region specific. At the time of writing, you cannot set quotas for individual users, so if your entire account has a limit of 20 EC2 instances, a single user can use them all up.

SSH keys of users can only be used to access CodeCommit repositories. A user’s SSH key cannot be used to log in to EC2 instances. EC2 instance SSH keys need to be shared among users.

Groups

Groups are created to manage users with similar access rights. You can add or remove user from group. A user can belong to multiple groups but a group can not belong to another group. Groups can be granted permissions by using Access Control Policies.

Roles

According to the AWS IAM FAQs, a role is an IAM entity with a set of permissions assigned to it. Roles are not associated with individual groups or users; they are assumed by trusted entities such as users, applications or services such as EC2 in order to perform certain actions. Roles are often confused with groups, but they’re different. Roles are really important for EC2 instances. They allow services running on EC2 to access other AWS services such as RDS and S3. Roles also allow rotation of credentials on EC2 instances. For more details on roles please read the roles section of the IAM FAQs page.

Permissions

Permissions are managed by access control policies. Policies may be attached to a user, group or role. By default groups and roles have no permissions. Users with the right privileges can grant the desired permissions to them.

Managed policies are resources which can be created and managed independently of groups and roles. AWS provides a set of managed policies. Customers can also create their own managed policies using either the visual editor or JSON via the CLI or API calls.

You can attach a single policy to multiple groups and roles. When you change that policy, the change takes immediate effect on all users and roles it’s associated with.

So what’s the difference between assigning permissions to groups and using managed policies? Quoting from the AWS FAQs:

“Use IAM groups to collect IAM users and define common permissions for those users. Use managed policies to share permissions across IAM users, groups, and roles. For example, if you want a group of users to be able to launch an Amazon EC2 instance, and you also want the role on that instance to have the same permissions as the users in the group, you can create a managed policy and assign it to the group of users and the role on the Amazon EC2 instance.”

Policy Simulator

You may use Policy Simulator to test and troubleshoot your IAM settings.

Temporary Credentials

Temporary credentials consist of an access key, a secret key and a session token which are only valid for a limited amount of time. They allow us to hand out credentials which expire after a certain time. This improves security, especially when calling APIs from mobile devices which can be lost. IAM users can request temporary security credentials for their own use by calling the AWS STS GetSessionToken API. The default expiration for these temporary credentials is 12 hours; the minimum is 15 minutes, and the maximum is 36 hours.

FAQ page
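Since STS takes the duration in seconds, it helps to convert and range check before making the call. A minimal sketch using the limits quoted above; the function and constant names are mine, and no AWS call is made.

```python
from typing import Optional

MIN_SECONDS = 15 * 60            # 15 minutes
DEFAULT_SECONDS = 12 * 60 * 60   # 12 hours
MAX_SECONDS = 36 * 60 * 60       # 36 hours


def session_duration_seconds(hours: Optional[float] = None) -> int:
    """Convert a requested lifetime in hours to seconds, enforcing the limits above."""
    if hours is None:
        return DEFAULT_SECONDS
    seconds = int(hours * 3600)
    if not MIN_SECONDS <= seconds <= MAX_SECONDS:
        raise ValueError(
            f"duration must be between {MIN_SECONDS} and {MAX_SECONDS} seconds, got {seconds}"
        )
    return seconds
```

The returned value is what you would pass as the duration when requesting a session token; anything outside the 15 minute to 36 hour window is rejected up front.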

The FAQ page for IAM is very detailed and answers many questions, so it’s good to skim through it. It also contains links to other useful resources, so do read it if you have time. Link: https://aws.amazon.com/iam/faqs/

How to do it

I covered a lot of theory above. Now let’s do it practically.

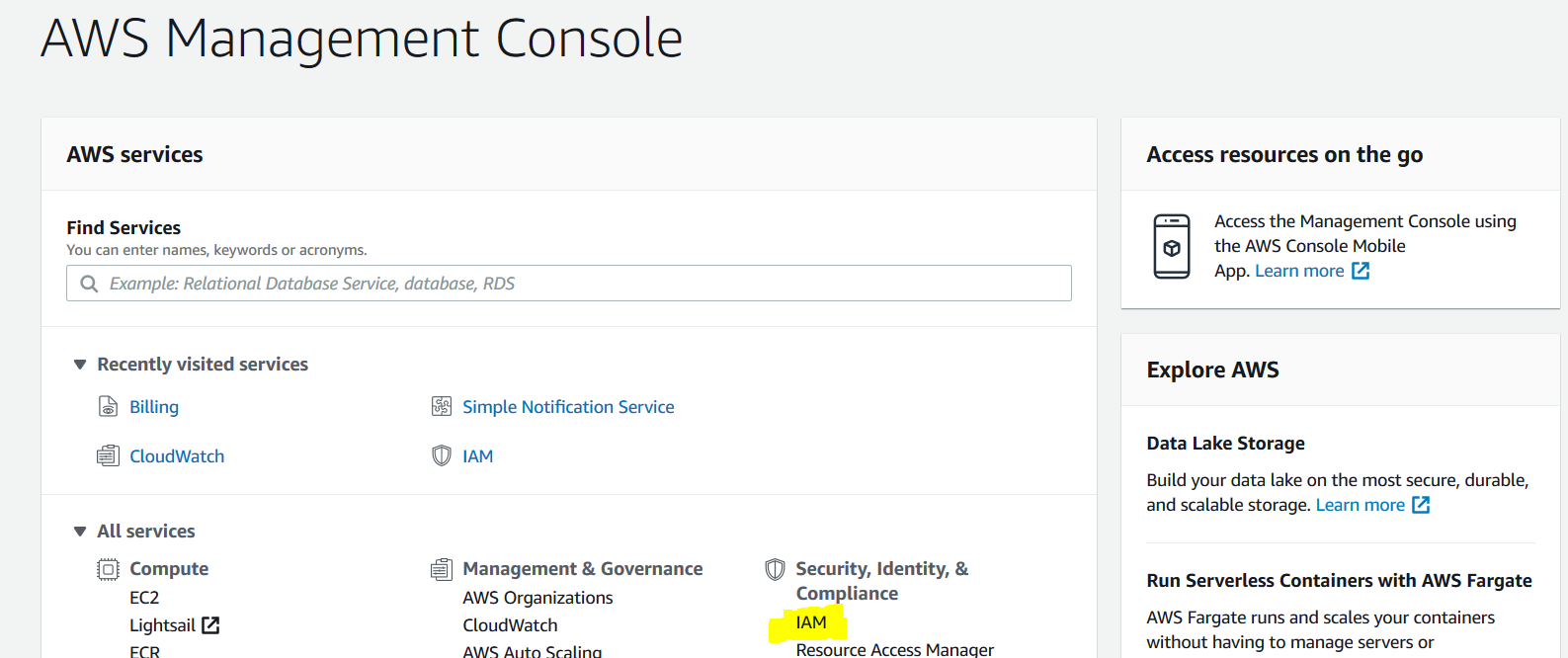

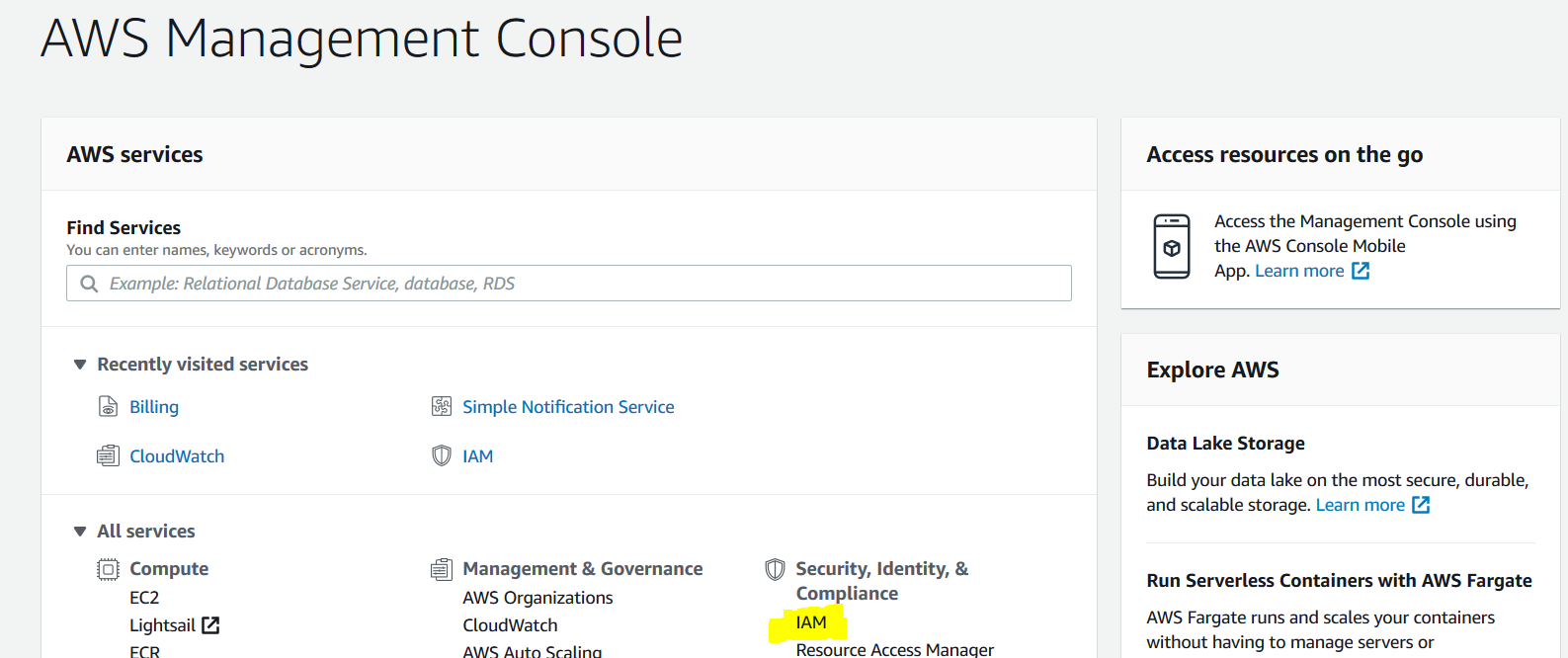

Log in to the AWS console and you will see a list of services. Select IAM under Security, Identity and Compliance.

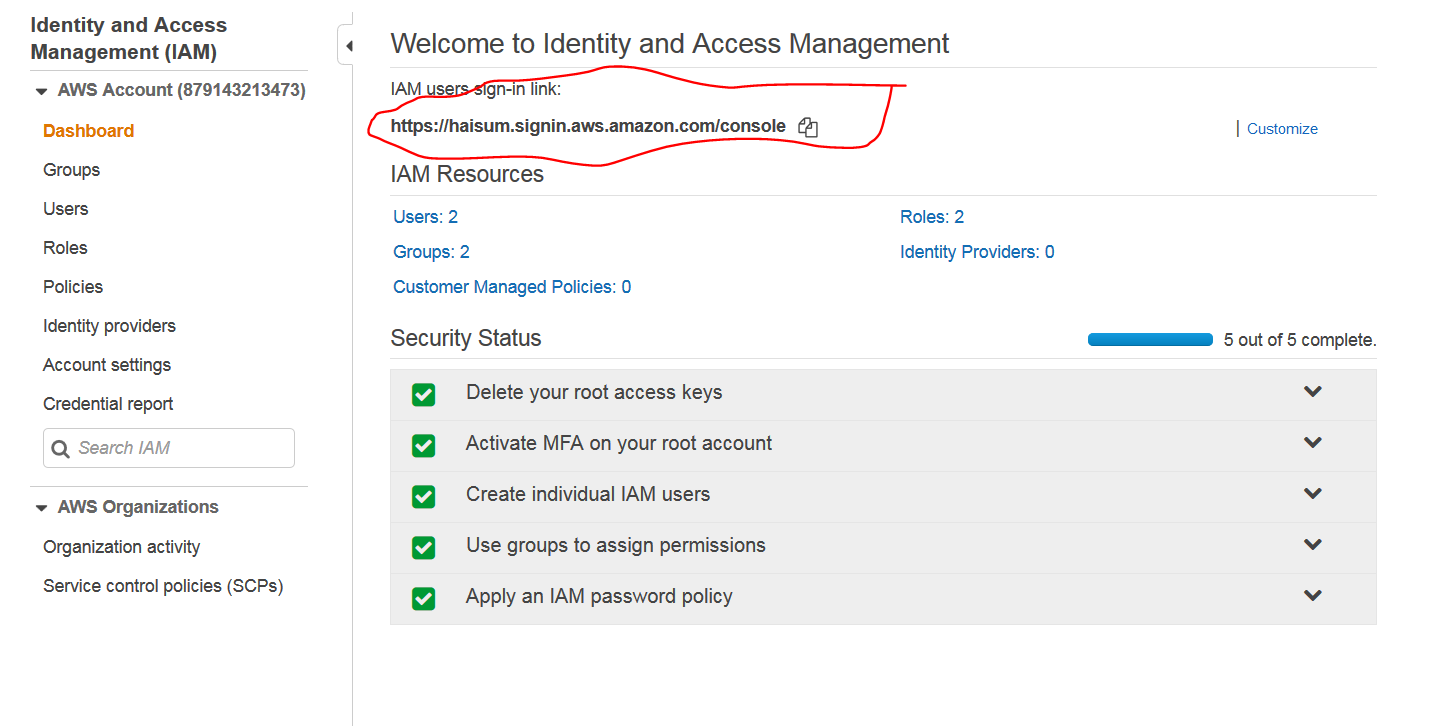

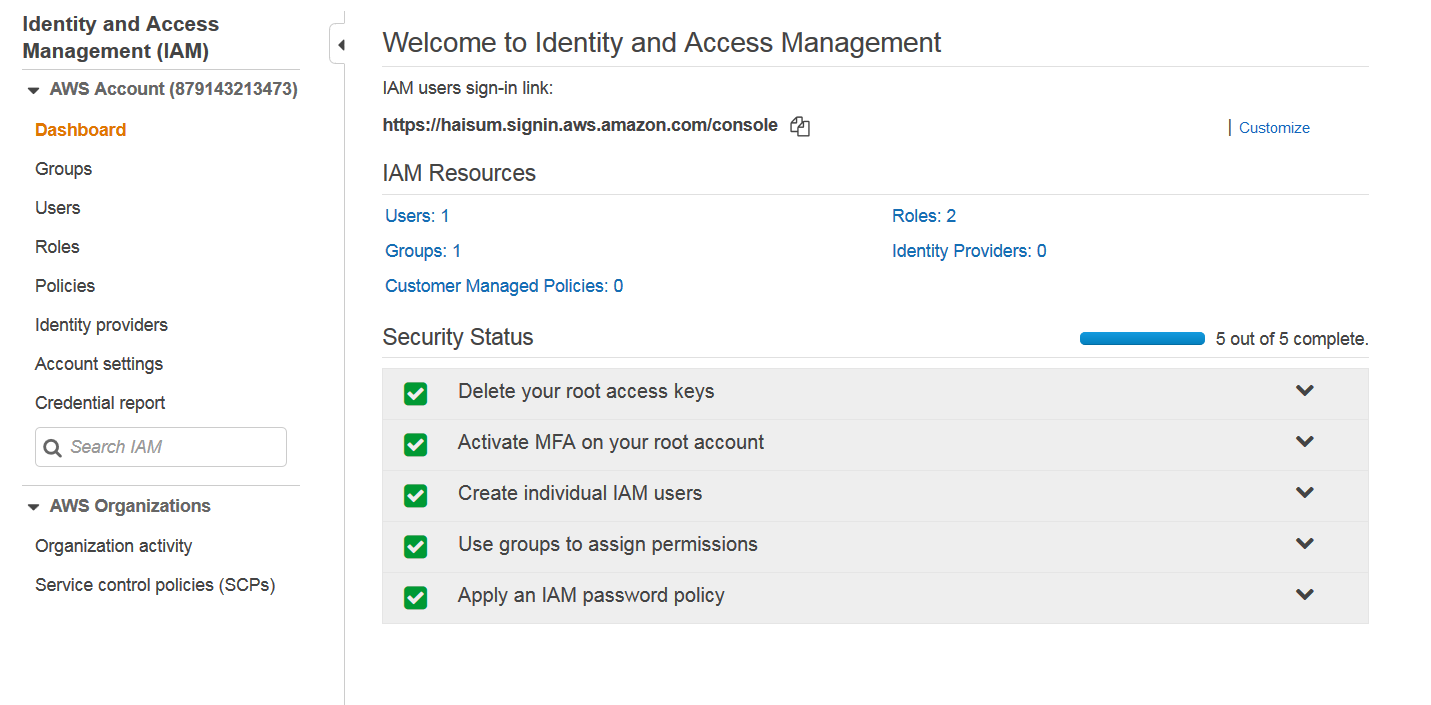

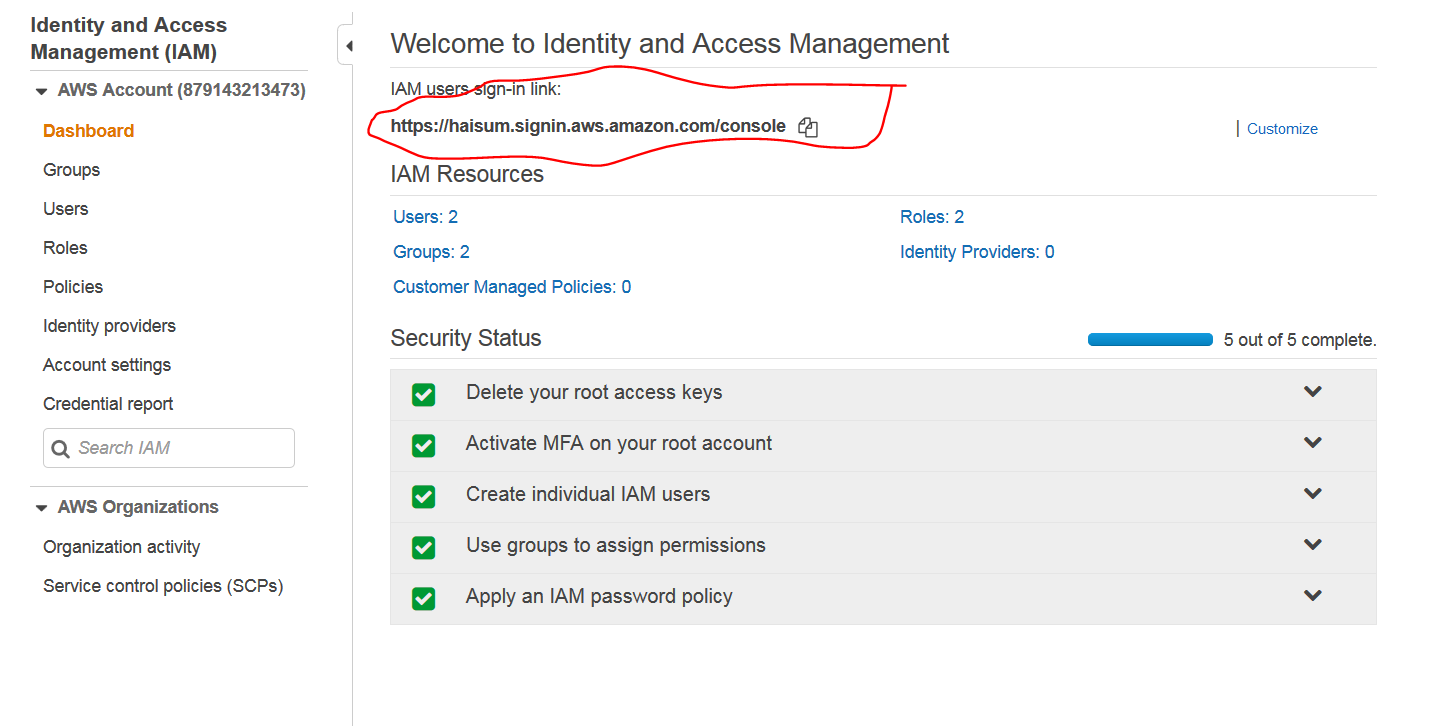

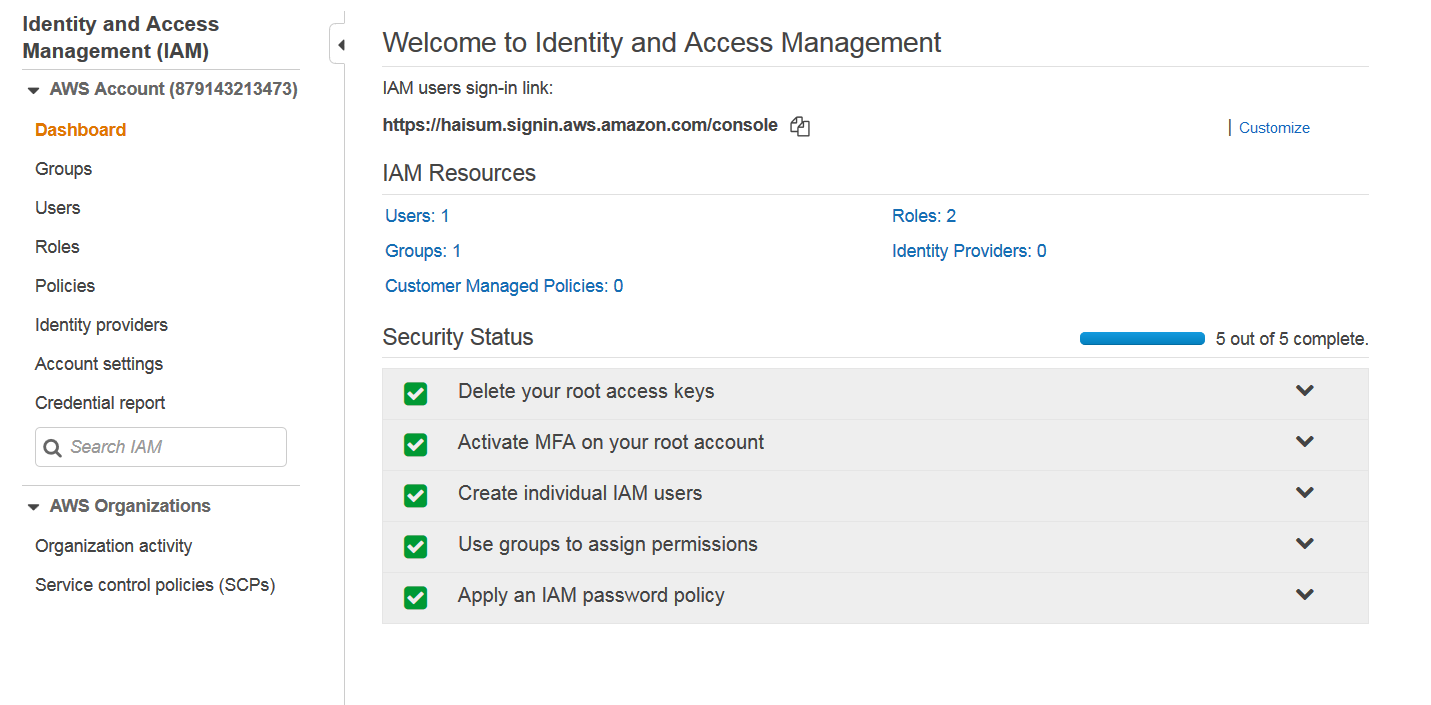

You will be taken to the IAM dashboard. Initially, 4 out of 5 items in Security Status will be marked red. You can go into each one and follow the suggested steps to make them green.

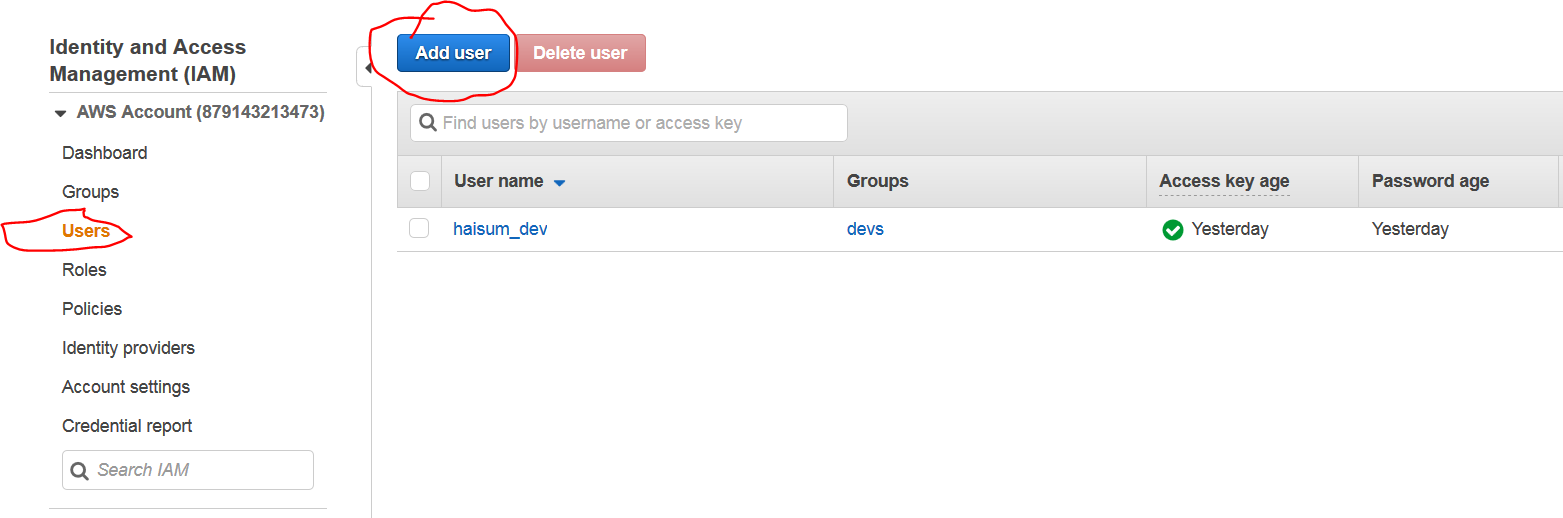

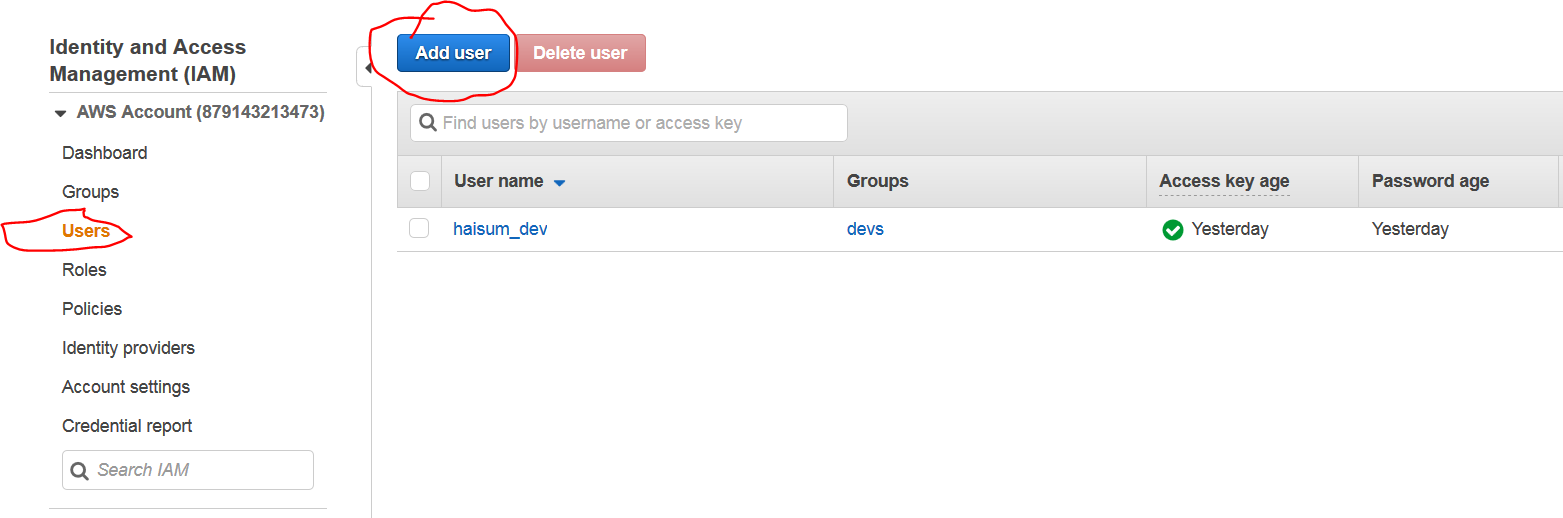

We will explore how to create users. In users tab, select add user.

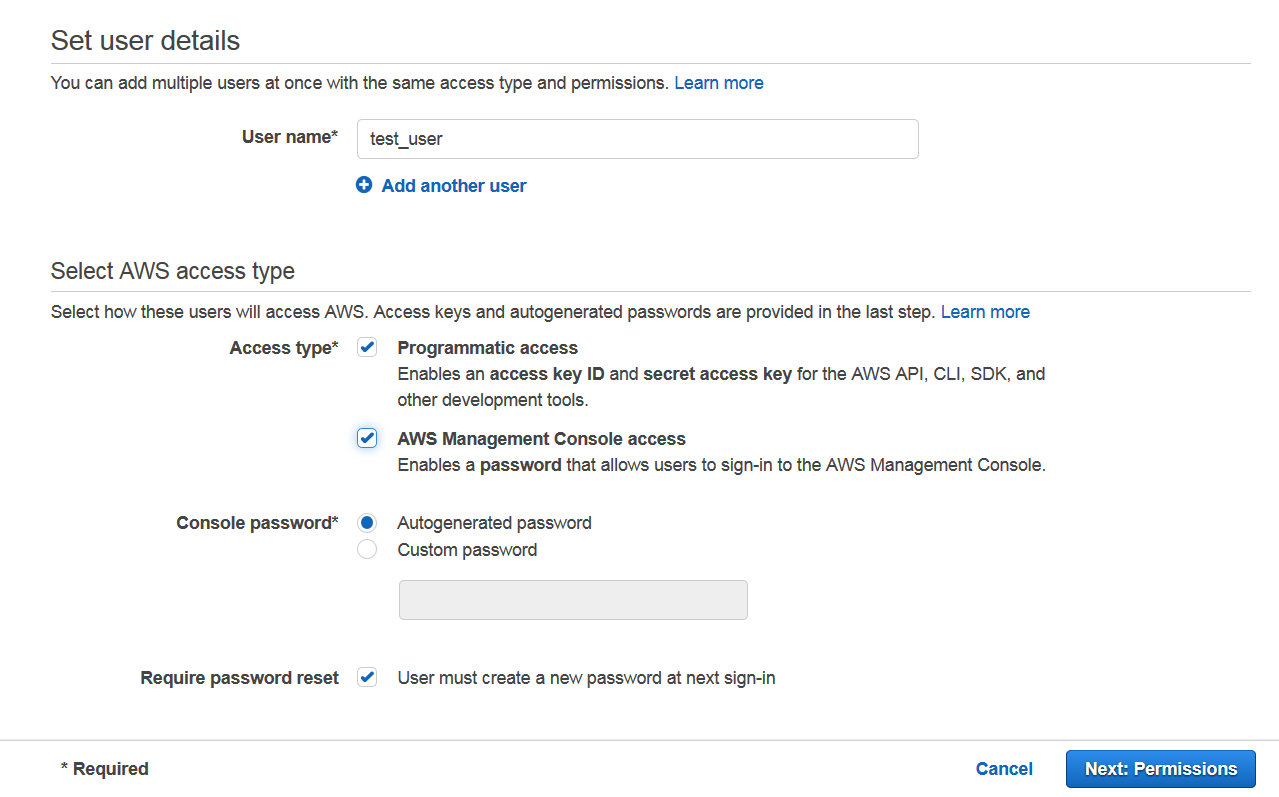

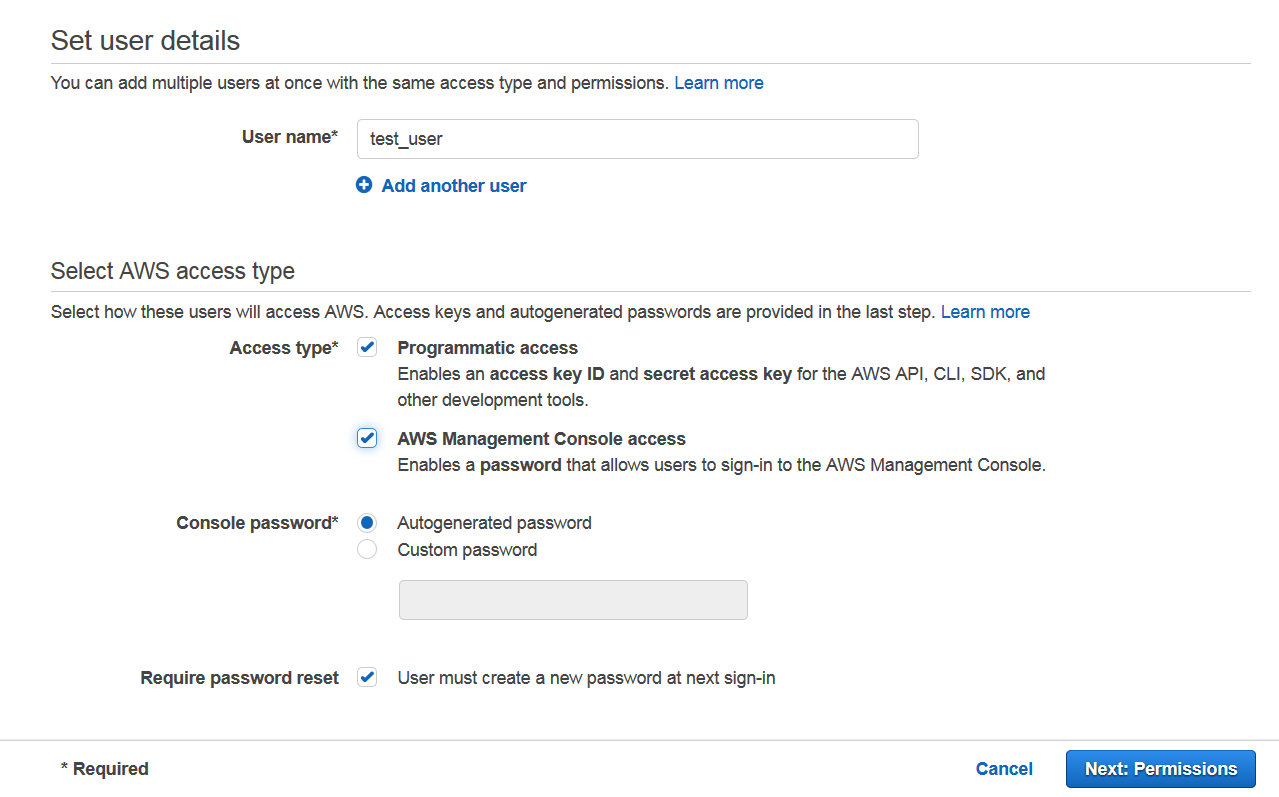

Select a name for user. You may want to give either or both of console and programmatic access to a user depending on use case. You can also auto generate or manually create a password for user. AWS can also force user to do a password reset on next login.

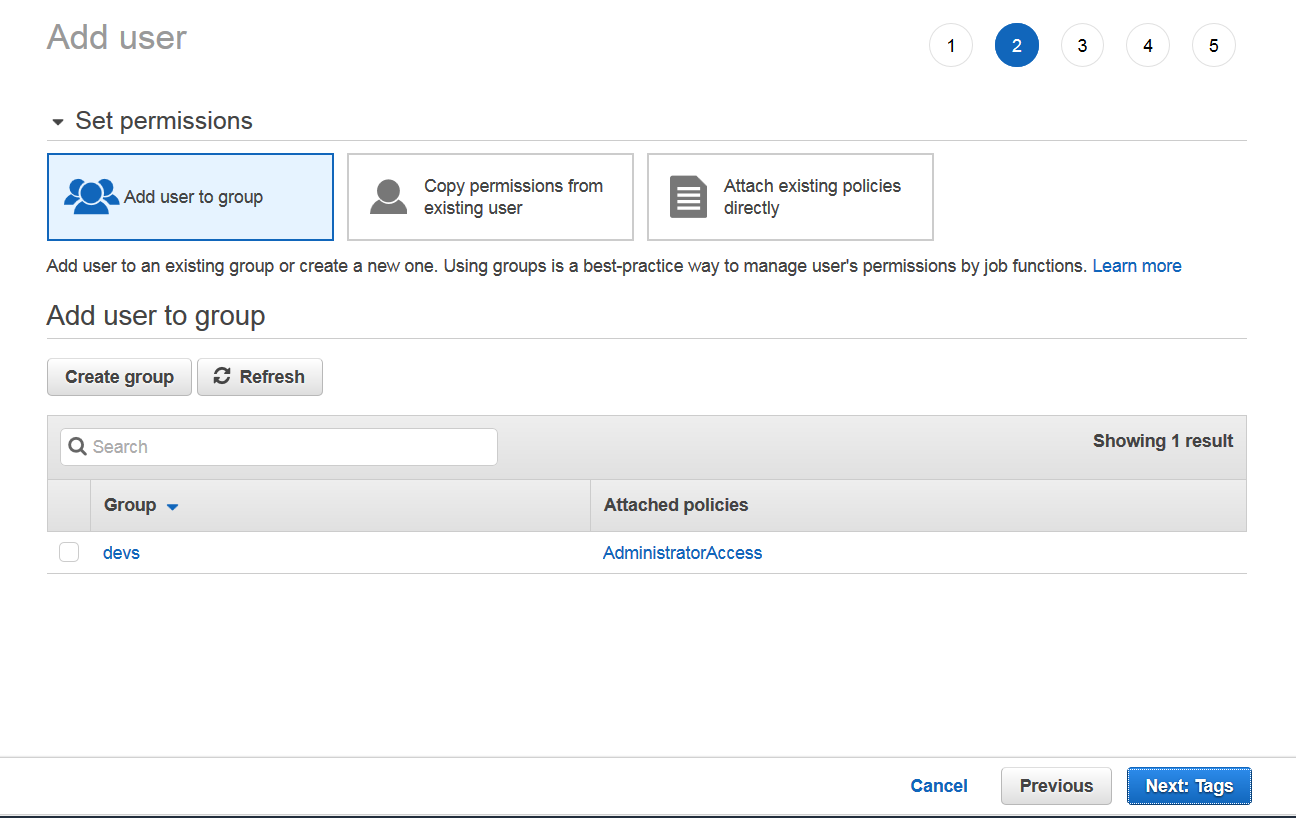

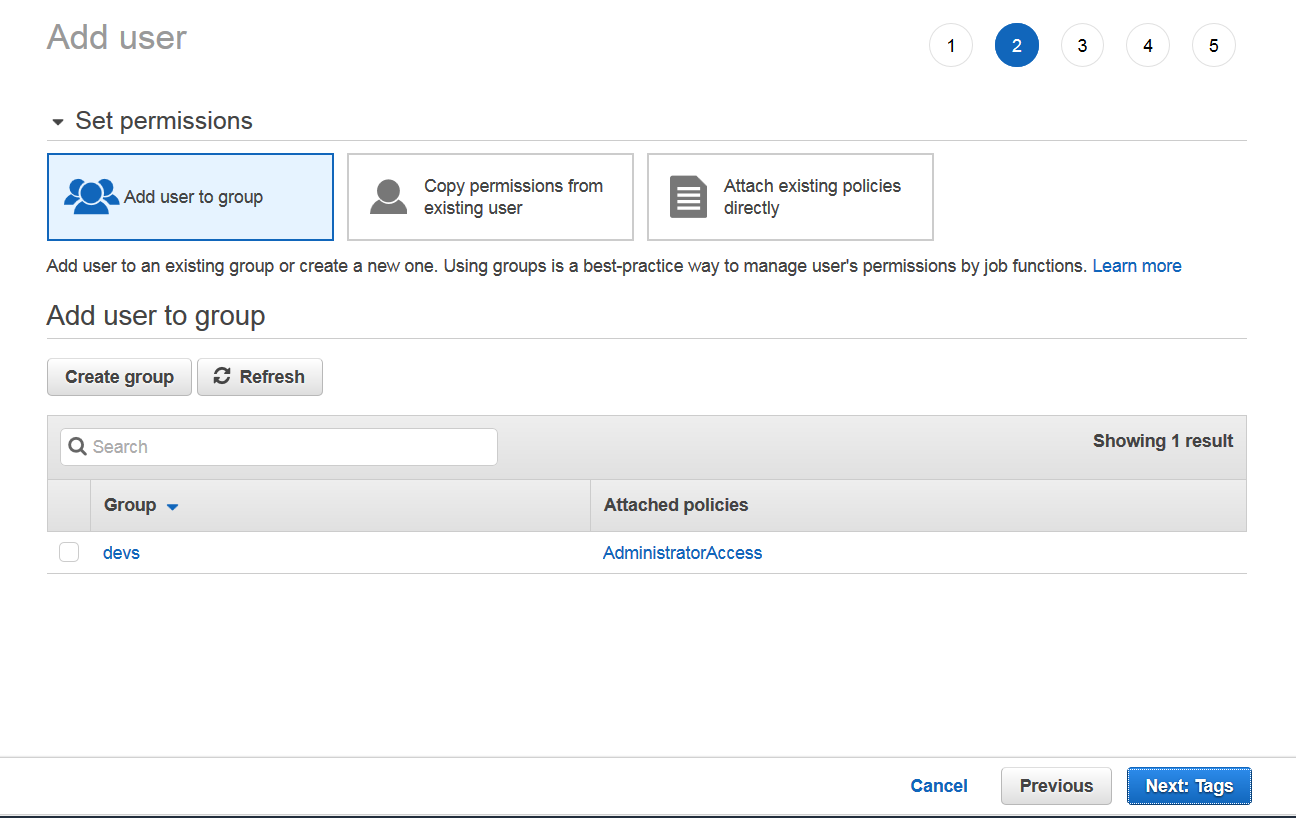

Next step is to add user to a group. You may create a new group here.

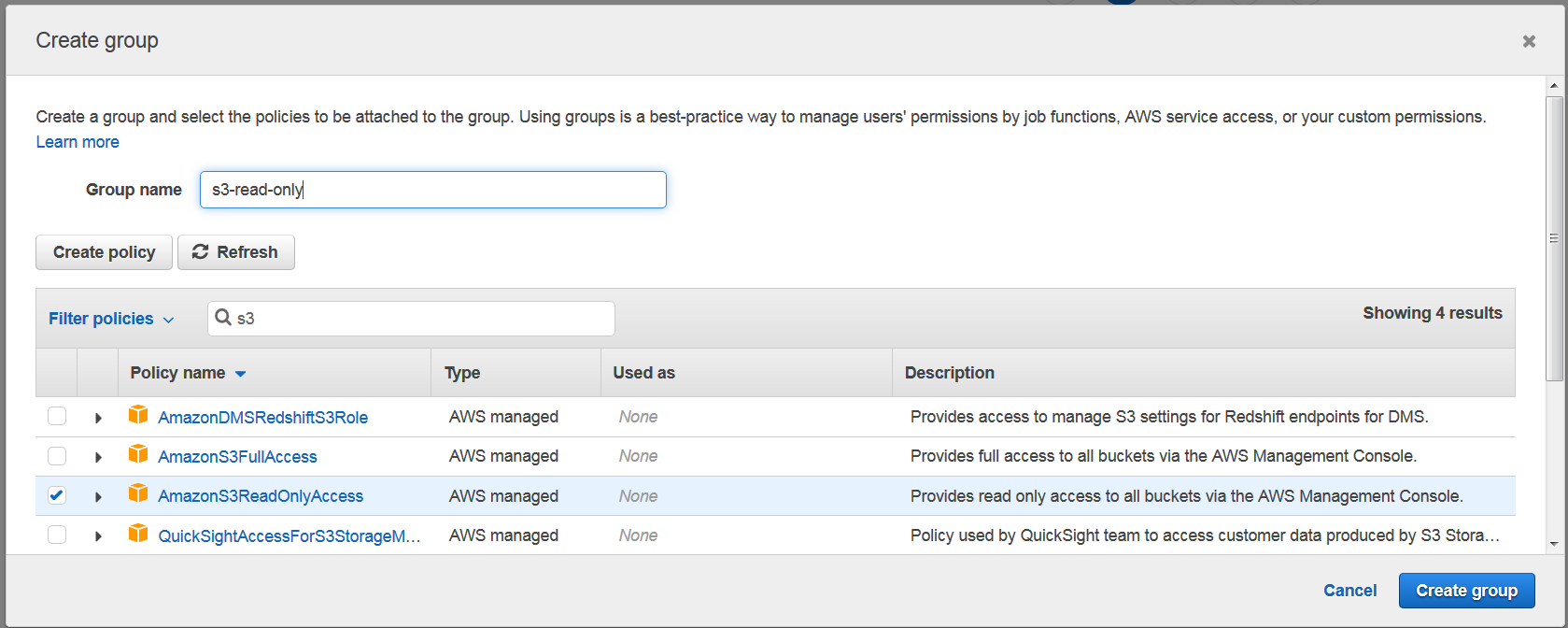

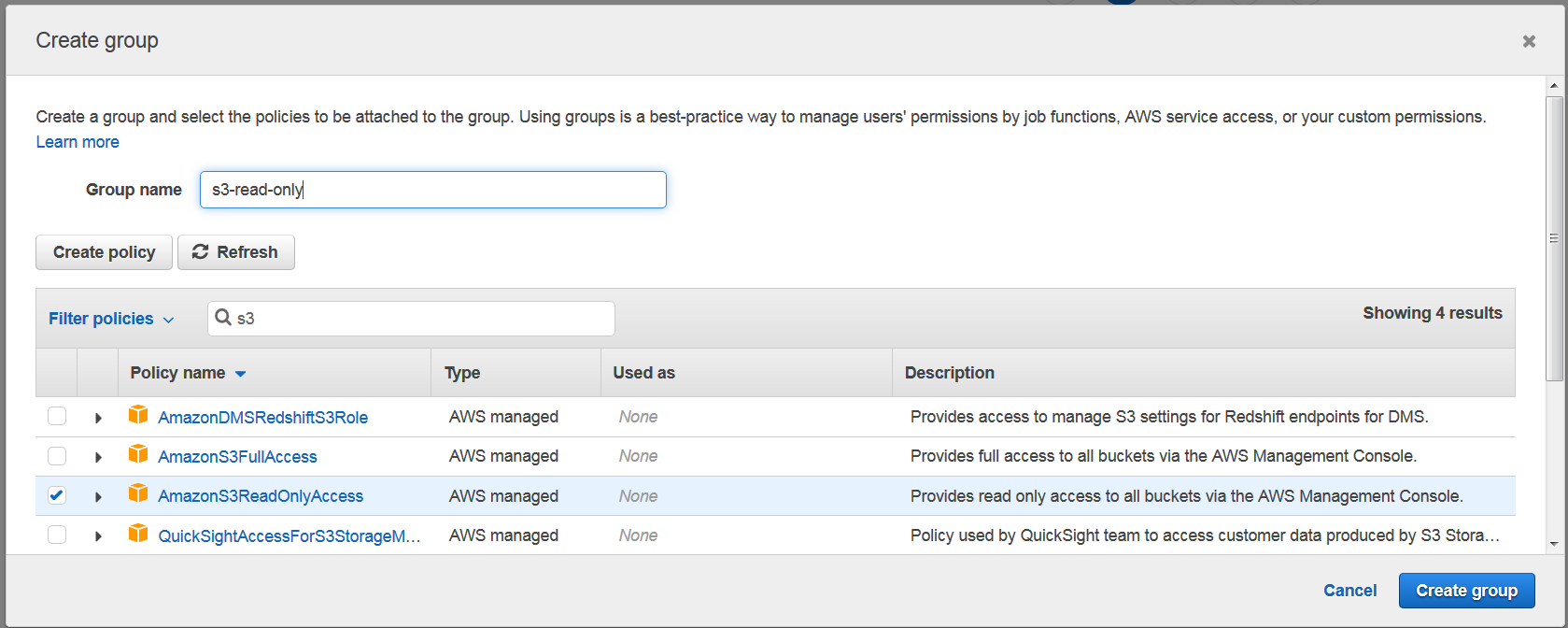

Click on create group button and a popup window will appear. Here you may want to select granular permissions for this group. For example, if you want user to have read only permissions to S3, you may create a group like this:

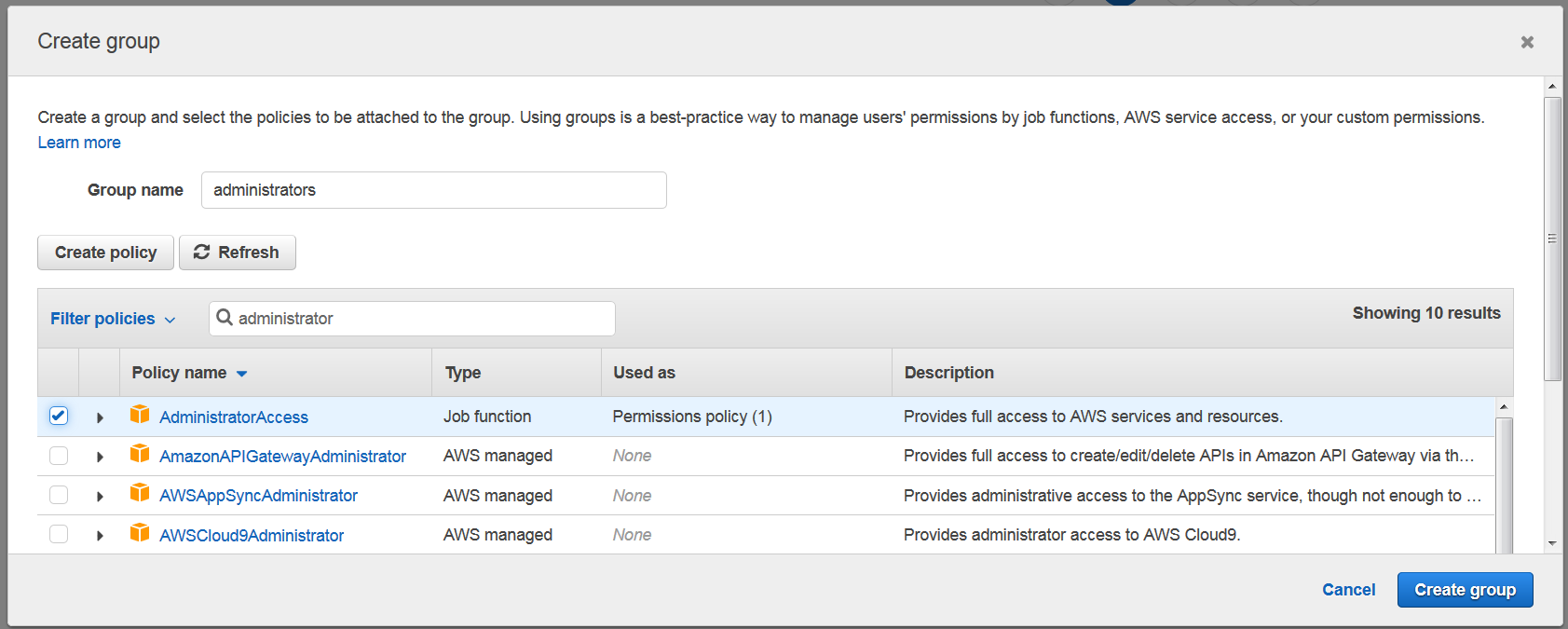

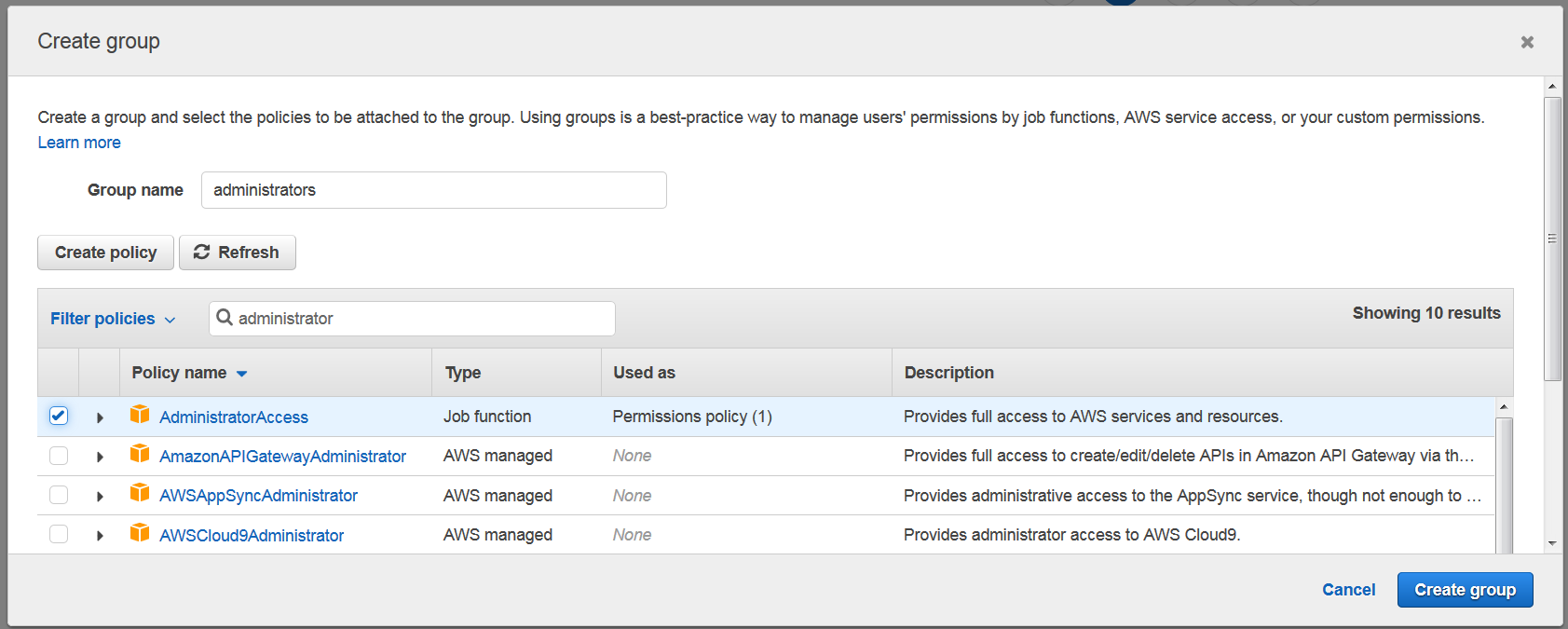

If you want to create group for administrators with all access, you may create a group like this:

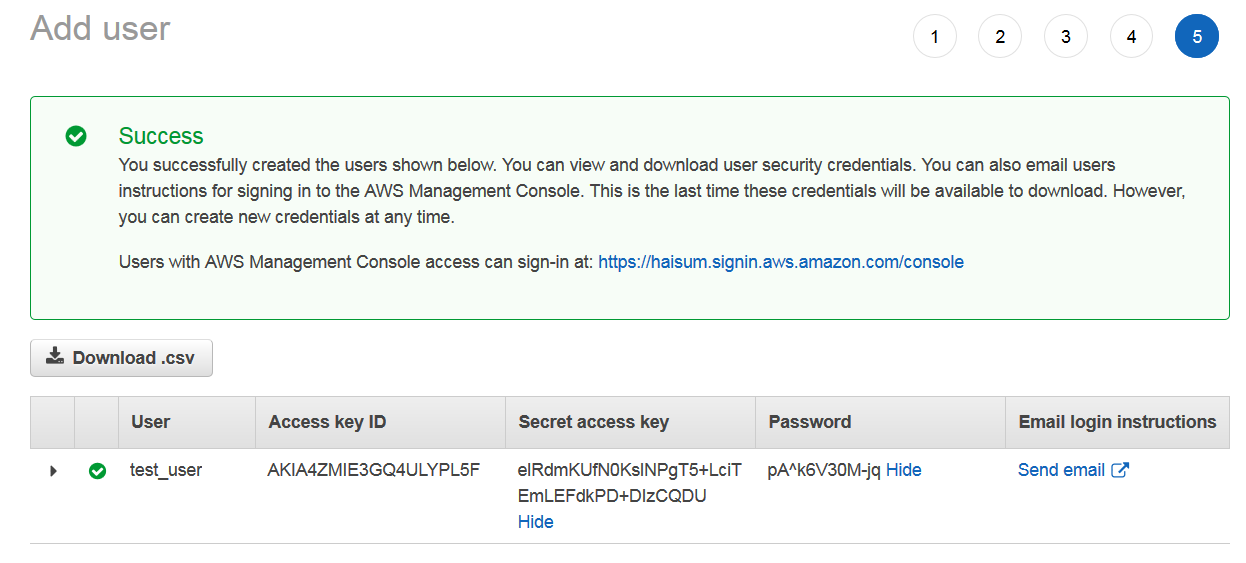

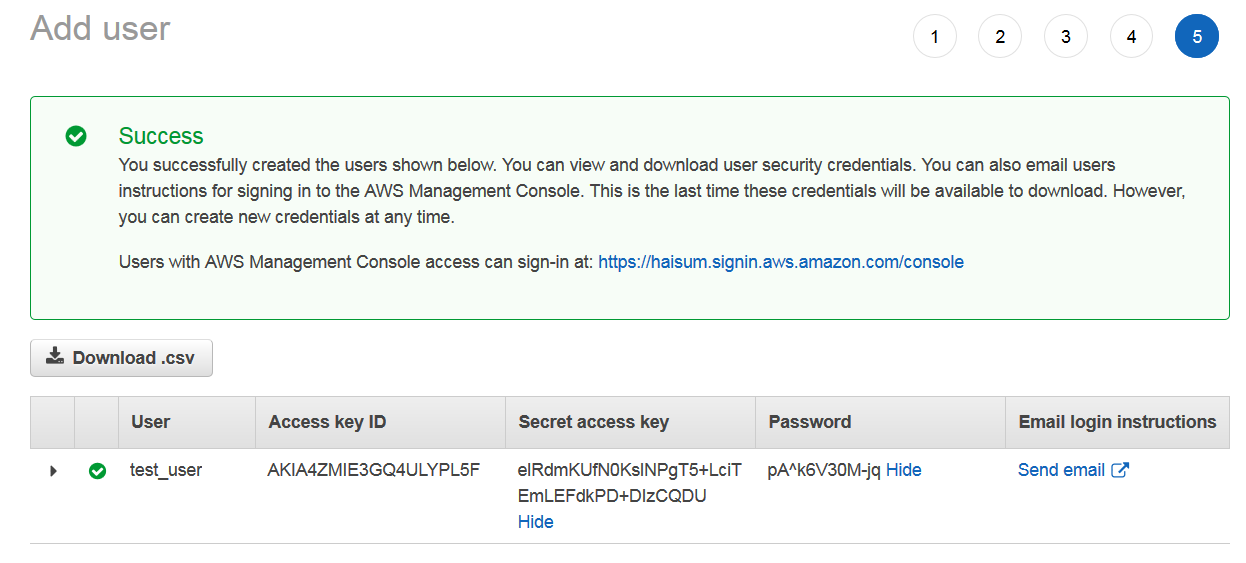

Next, you can add tags if you want to filter users by labels. Once the user is created, you will get a success window with the access key, secret key and password. This information is only presented to you once and can’t be retrieved again. So it’s a good idea to download the .csv file and share it with the user, or send it by email.

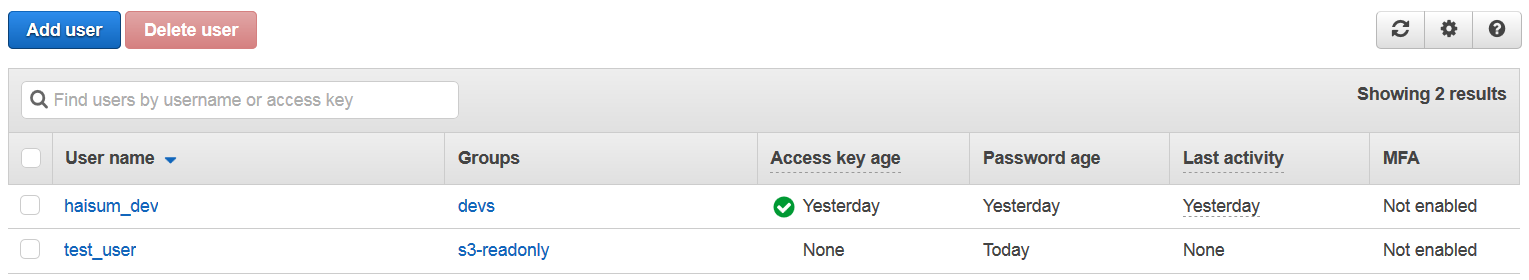

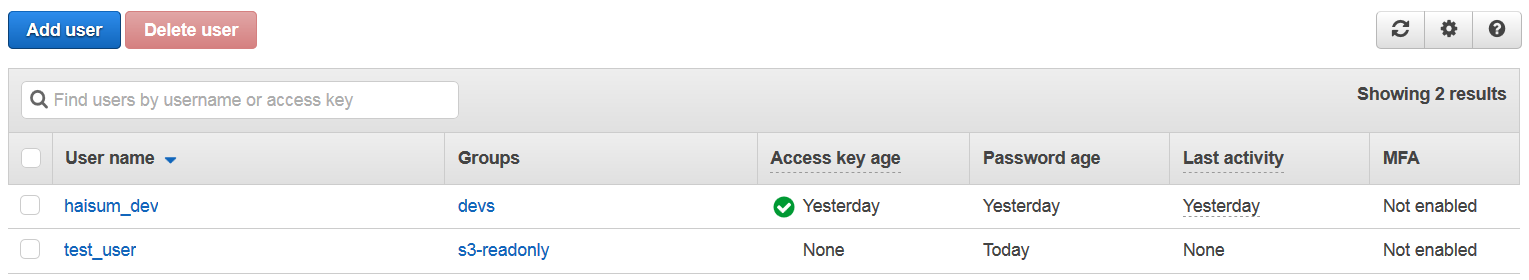

Once done, your user will start appearing in list of users.

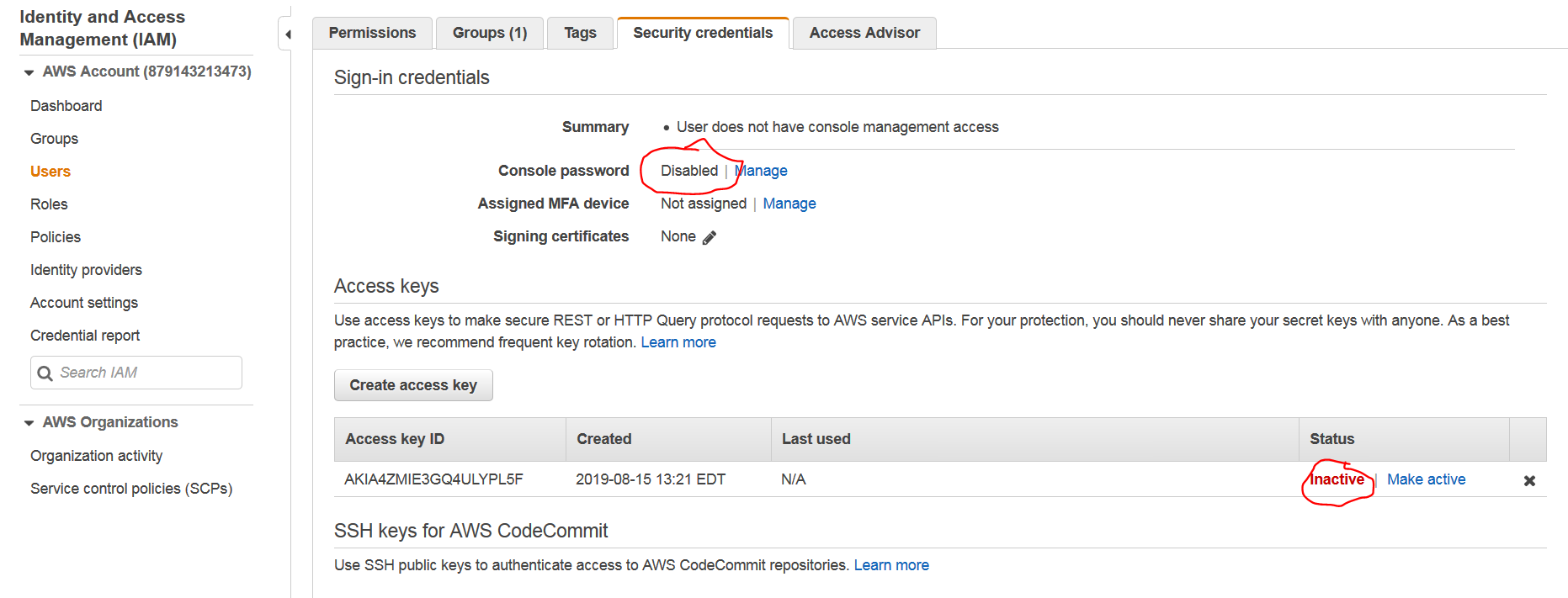

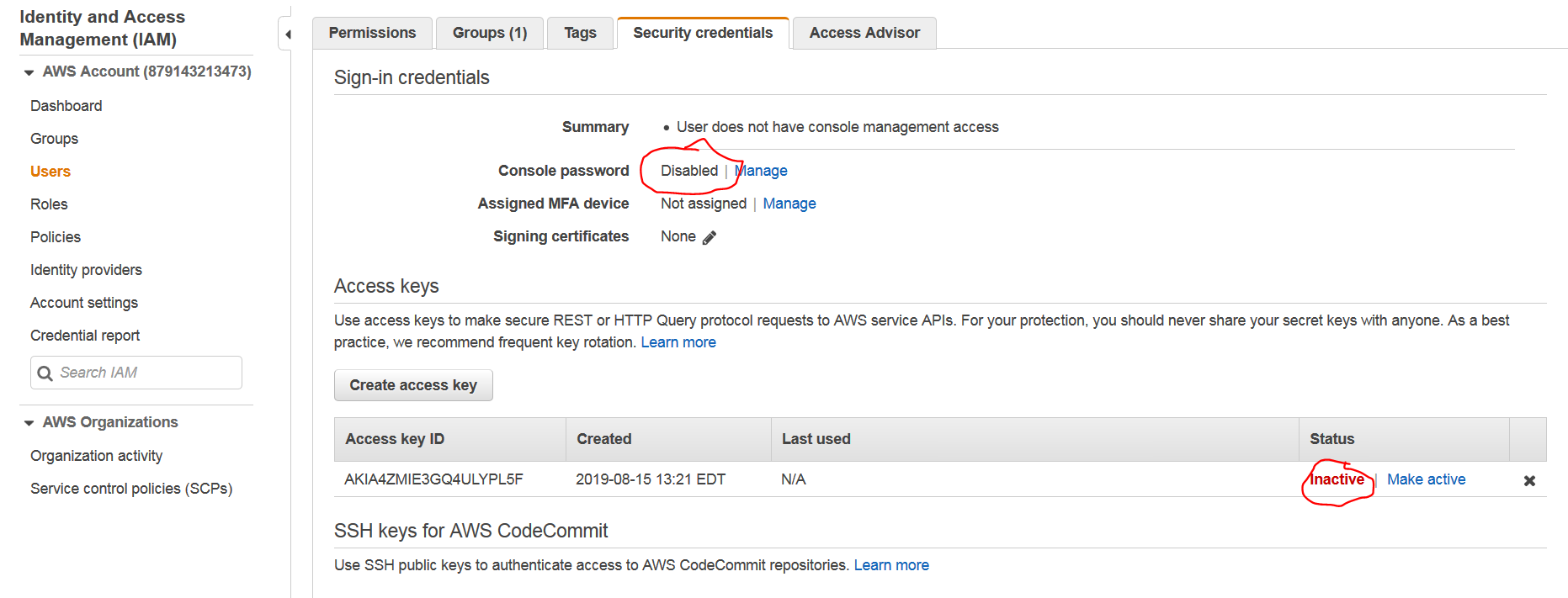

Since I shared password/access key here, I have disabled test_user and made access key inactive as shown below. You may disable/enable or recreate credentials of users in Security Credentials tab of user.

Once user is created, you can share User Sign In link for your AWS account with your users and then they can use this link to login using credentials you generated earlier.